Machine Culture

Levin Brinkmann, Fabian Baumann, Jean-François Bonnefon, Maxime Derex, Thomas F. Müller, Anne-Marie Nussberger, Agnieszka Czaplicka, Alberto Acerbi, Thomas L. Griffiths, Joseph Henrich, Joel Z. Leibo, Richard McElreath, Pierre-Yves Oudeyer, Jonathan Stray, Iyad Rahwan

Max Planck Institute for Human Development, Center for Humans & Machines, Lentzeallee 94, Berlin 14195, Germany

Toulouse School of Economics, Esplanade de l’Université, 31080 Toulouse Cedex 06, France

Department of Sociology and Social Research, University of Trento, Italy

Institute for Advanced Study in Toulouse, Esplanade de l’Université, 31080 Toulouse Cedex 06, France

Department of Psychology & Department of Computer Science, Princeton University, Princeton, NJ 08540

Department of Human Evolutionary Biology, Harvard University, 11 Divinity Ave, Cambridge, MA 02138, USA

DeepMind Technologies Ltd, London, United Kingdom

Max Planck Institute for Evolutionary Anthropology, Leipzig, Germany

Inria, Flowers team, Université de Bordeaux, France

Center for Human-Compatible Artificial Intelligence, University of California, Berkeley, USA

Abstract:

The ability of humans to create and disseminate culture is often credited as the single most important factor in our success as a species. In this Perspective, we explore the notion of ‘machine culture’: culture mediated or generated by machines. We argue that intelligent machines simultaneously transform the cultural evolutionary processes of variation, transmission, and selection. Recommender algorithms are altering social learning dynamics. Chatbots are forming a new mode of cultural transmission, serving as cultural models. Furthermore, intelligent machines are evolving into contributors to the generation of cultural traits, from game strategies and visual art to scientific results. We provide a conceptual framework for studying the present and anticipated future impact of machines on cultural evolution, and present a research agenda for the study of machine culture.

Introduction

The ability of humans to create and disseminate culture is considered the single most important factor in our species’ dominance on Earth.

Cultural evolution exhibits key Darwinian properties: culture has been shown to exhibit variation, transmission, and selection, and it evolves through the selective retention of cultural traits as well as through nonselective processes such as drift.

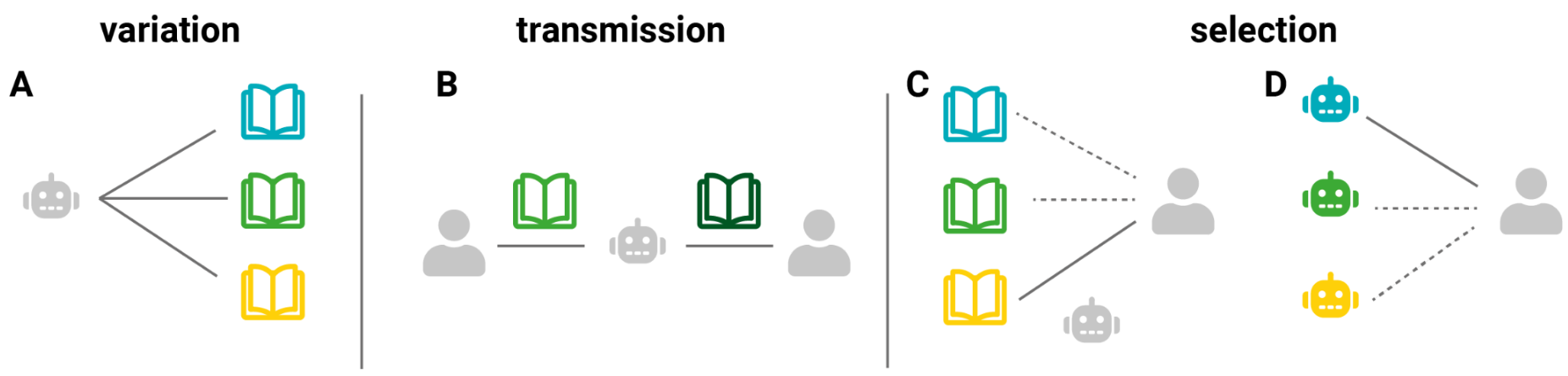

While new technologies have always affected the course of cultural evolution, in this article we argue that intelligent machines will exert a transformative influence on cultural evolution through their impact on all three Darwinian properties of culture: variation, transmission, and selection (Fig. 1). This process began in the early days of the Internet with machine-based content ranking by search engines and social media feed algorithms influencing what information people get from others. More recently, generative algorithms have begun participating in the creation of cultural traits themselves. We are not only observing a transformation of human culture but also its evolution into machine culture—culture mediated or generated by machines. This article aims to provide researchers across disciplines with a primer and a roadmap for navigating this monumental shift. As the impact of an increasingly digital society on cultural evolution has been explored elsewhere 1, we specifically focus on the current and potential impact of intelligent machines on cultural evolution. For the purposes of this article, we use the terms “intelligent machines” and “artificial intelligence (AI) systems” interchangeably, with AI referring to the science and technology that allow machines to perform tasks that typically require human intelligence such as perceiving the environment, planning and executing actions, and adapting by learning from data or experience 2.

Examples of machine-mediated cultural evolution

We begin by presenting empirical evidence of machine cultural evolution, setting the stage for a detailed exploration through a framework that discusses instances where machines mediate or generate cultural traits from a cultural evolutionary perspective. Through four pivotal examples, we illustrate the diverse ways intelligent machines are transforming cultural evolutionary dynamics. Generative machines, such as text-to-image algorithms, are contributing to the variety of cultural traits. Models drawing upon reinforcement learning are pushing humans onto novel ground, for instance in the ancient game of Go and beyond. Large language models (LLMs) are facilitating the transmission of cultural knowledge and redefining the value of human intellectual skills. Meanwhile, transmission pathways are rewired by recommender systems selecting what and from whom humans learn. At first glance, the examples provided might seem to pertain to vastly different technological areas, and to translate into a collection of unrelated effects. However, even now, machines are beginning to integrate features from a range of the outlined technologies—reinforcement learning, for instance, is enhancing generative AI. Furthermore, these technologies are operating on multiple levels; generative AI not only generates novel ideas but also offers recommendations for their refinement.

Box 1: Glossary

Culture: information capable of affecting individuals’ behaviors that is acquired from other individuals via social transmission

Cultural Evolution: the change of cultural information over time; the key properties of an evolutionary process are variation, transmission, and selection

Social Learning: learning that is influenced by the observation of another individual or their products.

AI: the science and technology enabling machines to perform tasks that typically require human intelligence such as perceiving the environment, planning and executing actions, and adapting by learning from data or experience.

Machines: intelligent machines. Used interchangeably with AI systems, thus referring to machines that may possess capabilities to perceive the environment, plan and execute actions, and adapt by learning from data or experience.

Variation: the existence of different cultural traits within a population. It represents the raw material on which other processes, like selection and transmission, operate. Humans and machines add to existing cultural variation through random and guided exploration, as well as recombination of existing cultural traits.

Transmission: the process by which cultural information, such as knowledge, behaviors, traditions, or practices, is passed from one individual to another through social learning mechanisms such as observation or teaching.

Selection: the process by which certain cultural traits, practices, or ideas become more or less prevalent within a population over time due to differential adoption.

Cultural recombination through generative AI

Generative AI has seen two major waves of innovation in recent years. The inception of Generative Adversarial Networks (GANs) by

These models can thus increase cultural variation by helping humans produce new and relevant recombinations, some of which are recognized as works of art and sold at prestigious auction houses 6. While recombination often forms the foundation of human creativity 7, it is still debated how, or even whether, machines can generate relevant content beyond the boundaries of human culture. Even simple latent representations can disentangle the semantic meaning of linguistic concepts 8. Similarly, text-to-image models use language as a cognitive tool to disentangle and then recombine visual concepts 9. However, these models build upon concepts harvested from human culture, so text-to-image models may be limited to the concepts that humans have defined and demonstrated in the underlying dataset. Nevertheless, generative AI models can produce novel “future art” by forecasting future art movements and by deliberately avoiding classification into established artistic movements; such creations have been evaluated as more creative than prestigious contemporary works by human artists 10. When applied to engineering, similar methods can lead to the discovery of designs that are both novel and superior 11.

Generative AI exemplifies the dual nature of machines as both cultural artifacts and creators thereof. On the one hand, generative algorithms are increasingly presented and, to some extent, recognized as authors of art 6. On the other hand, machines are themselves subject to cultural processes of comparison, distribution, modification, and eventual abandonment.

Cultural innovation through reinforcement learning

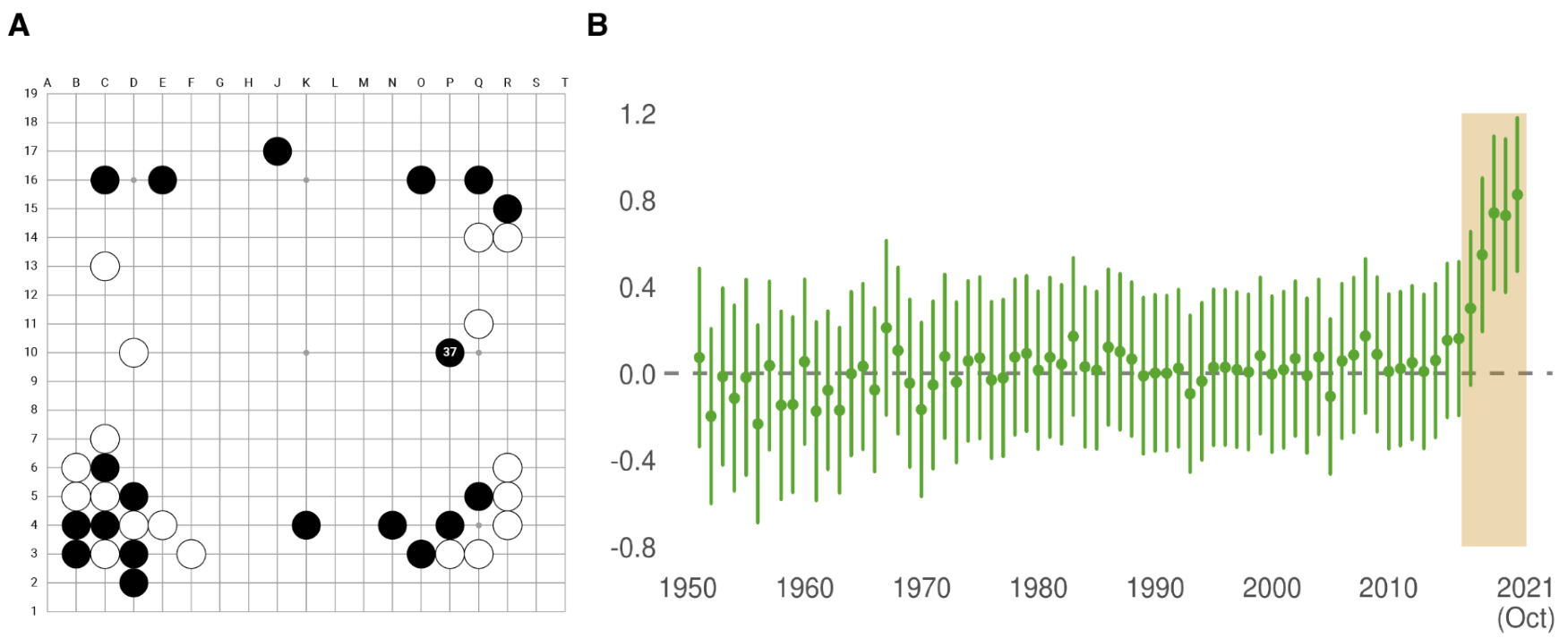

In 2016, AlphaGo defeated Lee Sedol, a world champion Go player, winning four of five games. Remarkably, AlphaGo managed to surprise Lee with distinctively nonhuman gameplay. In particular, move 37 in the second game was considered extremely unconventional, estimated by AlphaGo itself to have a 1 in 10,000 chance of being played by a human.

The innovations generated by AlphaGo and AlphaGo Zero soon entered human culture, as shown by research comparing human gameplay before and after the algorithms’ introduction

Language models transmit and revalue cultural knowledge

The release of ChatGPT, a widely accessible LLM, has revolutionized how we interact with machines, with people using it to learn, brainstorm, and refine ideas. Trained on extensive human text data, both historical and contemporary, LLMs act as models of human culture.

As the capabilities and usages of LLMs continue to develop, the value of certain human skills will shift. Some skills may lose value quickly, especially in language-related and cognitively demanding occupations such as translation, copywriting, or proofreading

Cultural rewiring through recommender systems

The digital age is so data-rich that it has become increasingly hard for humans to navigate available information. In this abundance, recommender systems that manage and filter information have a silent but increasingly important role that is easily overlooked given how seamlessly these systems have integrated into our everyday digital lives. These systems select and prioritize items based on a variety of explicit and implicit features, including personal interests, control settings, past user behavior, and the behavior of similar users. While recommender systems do not add variation in cultural traits, they demonstrably impact the selective retention and transmission of cultural traits.

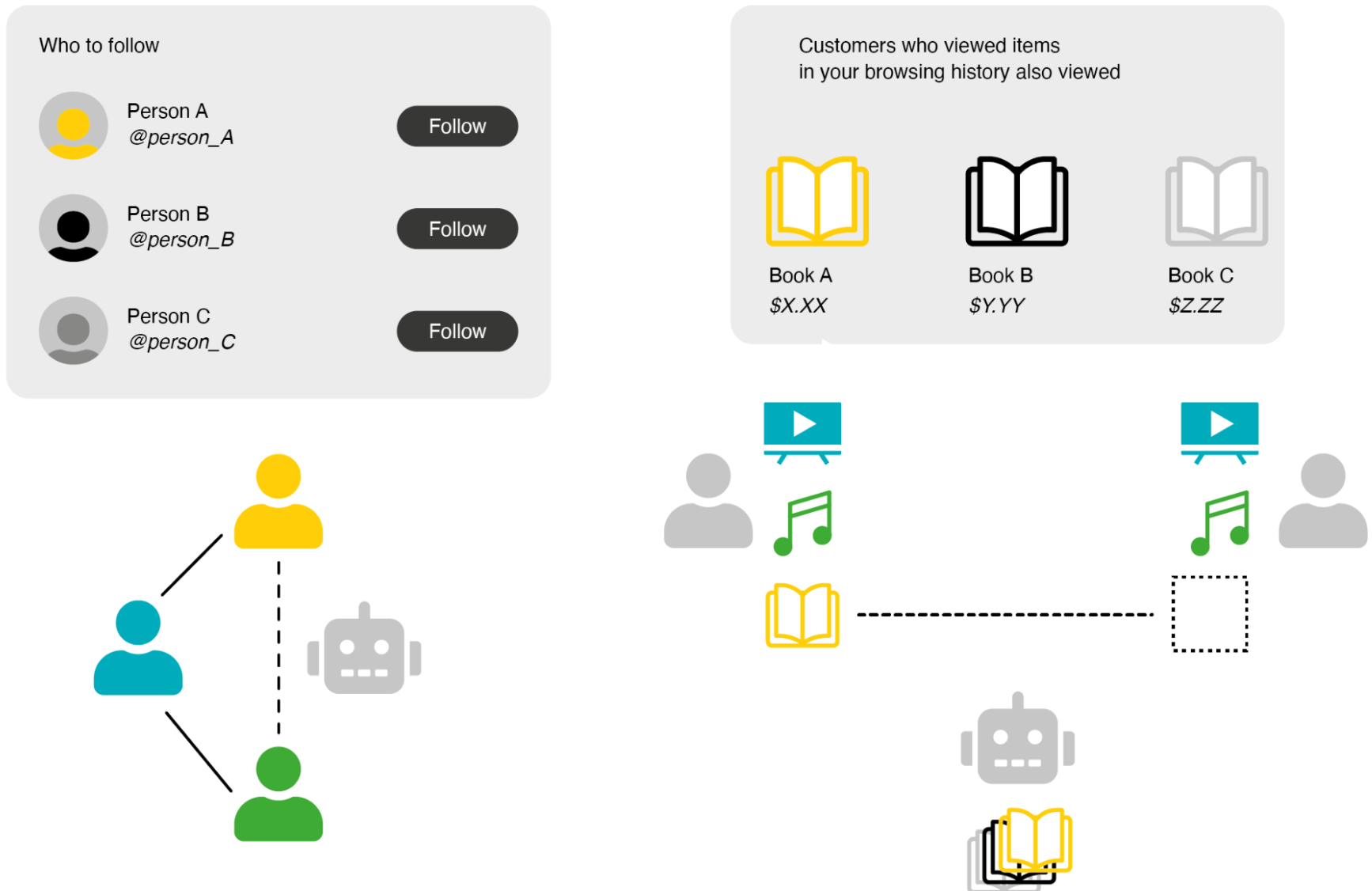

By promoting new social ties, such as suggesting whom to follow on X (formerly Twitter), date on Tinder, or work with on LinkedIn, recommender systems alter the people to whom we are exposed, ultimately changing the structure of our social networks and hence the pathways of cultural transmission 12 (see Fig. 4.A). But recommender systems can also bypass network structures, exerting an even more direct impact on which cultural content or products we are exposed to. For example, e-commerce websites and streaming platforms deploy recommender systems to steer customers through the expansive array of available products based on content and collaborative filtering. Content filtering matches information about a customer’s consumption history with the attributes of all available products to make suggestions about related purchases: a customer who has recently purchased running shoes may receive suggestions for additional running equipment, matched to the shoes in price and design 13. Meanwhile, collaborative filtering 14 makes recommendations based on less obvious patterns of correlation in users’ profiles: if users A and B overlap in their previously consumed movies and music, the recommender system might suggest content of a completely different kind, for example, recommending to user A a book that user B liked (see Fig. 4.B). In sum, recommender systems influence cultural evolution by rewiring our social networks and modifying information flows, such that they can substantially influence the dynamics of cultural markets 15.
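To make the user A / user B example concrete, here is a minimal user-based collaborative filtering sketch. It assumes binary consumption data and cosine similarity between users; the users, item names, and the functions `cosine` and `recommend` are hypothetical illustrations, not the algorithm of any particular platform.

```python
import math

# Hypothetical binary histories (user -> items consumed and liked), mirroring
# the text: A and B overlap in movies and music, and only B has read book_1.
histories = {
    "A": {"movie_1", "movie_2", "album_1"},
    "B": {"movie_1", "movie_2", "album_1", "book_1"},
    "C": {"album_2", "book_2"},
}


def cosine(a: set, b: set) -> float:
    """Cosine similarity between two binary item vectors stored as sets."""
    if not a or not b:
        return 0.0
    return len(a & b) / math.sqrt(len(a) * len(b))


def recommend(user: str, histories: dict, k: int = 1) -> list:
    """User-based collaborative filtering: score each unseen item by the summed
    similarity of the other users who consumed it."""
    seen = histories[user]
    scores = {}
    for other, items in histories.items():
        if other == user:
            continue
        sim = cosine(seen, items)
        for item in items - seen:
            scores[item] = scores.get(item, 0.0) + sim
    # Highest score first; ties broken alphabetically so the output is stable.
    ranked = sorted(scores, key=lambda item: (-scores[item], item))
    return ranked[:k]


print(recommend("A", histories))  # prints ['book_1']
```

User A is recommended the book purely because A's history resembles B's; nothing about the book's content is consulted, which is what distinguishes collaborative from content filtering.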

A framework for machine-mediated cultural evolution

Building on these exemplary instances of how machine technologies may impact cultural evolution, we will now map out a systematic framework for studying the potential of machines to shape cultural evolutionary processes.

Culture has been defined as information capable of affecting individuals’ behaviors that is acquired from other individuals via social transmission

Variation refers to the existence of different cultural traits within a population. Transmission involves the spread of cultural information from one individual to another through social learning mechanisms, including observation and teaching. During this transmission, information losses often occur, affecting the preservation of cultural traits. Selection occurs when certain cultural traits are more likely to be adopted by individuals due to factors such as their usefulness, popularity, or compatibility with existing cultural practices. Crucially, the prevalence of specific traits over time can be influenced by both their selection and variations in transmission fidelity, contingent on the traits’ characteristics. We anticipate that machine technologies will affect each of these three properties (see Fig. 4), which are likely to have transformative impacts on cultural evolution.
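The interplay of the three properties can be illustrated with a toy simulation. The sketch below is a minimal Wright-Fisher-style model under stated assumptions: learners copy a model chosen with probability proportional to a hypothetical trait `fitness` (selection), copy it faithfully with probability `fidelity` (transmission), and otherwise adopt a random trait from a hypothetical `trait_pool` (variation). All names and parameter values are illustrative, not estimates from the literature.

```python
import random
from collections import Counter


def generation(population, fitness, fidelity, trait_pool, rng):
    """One round of cultural transmission with biased copying and copying error."""
    # Selection: each learner picks a model with probability proportional to
    # the (hypothetical) appeal of the model's trait; unlisted traits weigh 1.
    weights = [fitness.get(trait, 1.0) for trait in population]
    models = rng.choices(population, weights=weights, k=len(population))
    new_population = []
    for trait in models:
        if rng.random() < fidelity:
            new_population.append(trait)  # transmission: faithful copy
        else:
            new_population.append(rng.choice(trait_pool))  # variation: error/innovation
    return new_population


def simulate(n=100, generations=50, fidelity=0.95, seed=0):
    """Run the toy model and return trait counts in the final generation."""
    rng = random.Random(seed)
    trait_pool = ["a", "b", "c", "d"]
    fitness = {"a": 1.5}  # hypothetical: a carrier of "a" is 1.5x as likely to be copied
    population = [rng.choice(trait_pool) for _ in range(n)]
    for _ in range(generations):
        population = generation(population, fitness, fidelity, trait_pool, rng)
    return Counter(population)


print(simulate())
```

In this framing, `fidelity` controls how much variation leaks in through imperfect transmission, while `fitness` sets the strength of selection; the text's point that trait prevalence depends on both corresponds to varying the two parameters together.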

Table 1. Tentative conjectures about ways in which machines might shape the processes of cultural evolution.

| Process | Machine capability | Possible impact on culture |
|---|---|---|
| Variation | Learning at unprecedented scale and speed (e.g., reinforcement learning) | Emergence of solutions culturally alien to humans |
| | Superhuman model complexity | Generation of solutions inconceivable by collective human intelligence and human cultural evolution |
| | Incomparably broad and deep knowledge base | Creation of novel recombinations beyond the human horizon |
| | (Semi-)autonomous creativity (e.g., image generation models) | Facilitation or crowding out human participation in creative exploration |
| Transmission | Exposing and/or preserving documented cultural knowledge (e.g., LLM chatbots) | Enhanced retrieval and increased transmission fidelity of documented cultural knowledge |
| | Reproduction of human biases | Amplification or mitigation of existing biases; potential for increased cultural erosion |
| | Accelerated processing of empirical evidence | Facilitation of transmission of less compressed knowledge |
| | Leveraging the unique cognitive capabilities of both humans and machines | Expanding the collective capacity to maintain diverse cultural artifacts |
| Selection | Recommendation of social ties (e.g., link recommendation algorithm) | Shaping social networks, potentially inhibiting or enhancing serendipitous encounters |
| | Curating content (e.g., ranking algorithms, collaborative filtering) | Indirectly shaping social networks via content exposure |
| | Shaping incentives for human content creators (e.g., clickbait) | |
| | Adapting to human feedback (e.g., RLHF) | Alignment of machines to human goals, potentially leading to unintended consequences (e.g., spread of highly believable myths) |
| | Machine learning from machine-generated content | Selection of machine-generated content as main driver of cultural evolution |
| | Adaptability of AI models to market/societal demands | Proliferation of appealing apps with varying alignment to human welfare |
| | Competing with humans in cultural production (e.g., poetry) | Specialization of humans and machines in distinct niches |

Variation

Variation refers to the presence of diverse cultural traits within a population. Humans and machines contribute to this existing cultural variation through both random and guided exploration, as well as the recombination of existing cultural traits. However, machines, leveraging their unique capacities, can produce traits distinct from those produced by humans, thus potentially steering culture toward new paths.

Machines have the ability to learn individually at an unparalleled scale, enabling the discovery of novel cultural traits through extensive exploration. Thorough exploration is not unique to machines. Thomas Edison, for example, famously tested over 6,000 materials to find the most suitable filament for his incandescent light bulb

Machines and humans employ guiding models—policies—that aid in efficiently exploring complex solution spaces, thereby enabling the discovery of solutions that would be unobtainable through random exploration alone. For instance, Mesoamerican skywatchers, via an early example of human astronomic modeling, developed a policy for optimal seed planting times, maximizing agricultural productivity
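The advantage of guided over random exploration can be sketched on a toy search problem. Assume, purely for illustration, that the unknown solution is a hypothetical 40-bit `target` and that each attempt returns graded feedback (the number of matching bits). A simple hill climber, standing in here for a learned policy, exploits that feedback; blind random sampling cannot. The landscape and all names are assumptions, and on a landscape without graded feedback the guided rule would lose its edge.

```python
import random

N_BITS = 40


def score(candidate, target):
    """Number of positions that match the (hidden) target solution."""
    return sum(c == t for c, t in zip(candidate, target))


def random_search(target, budget, rng):
    """Blind exploration: sample independent random candidates, keep the best score."""
    best = 0
    for _ in range(budget):
        guess = [rng.randint(0, 1) for _ in range(N_BITS)]
        best = max(best, score(guess, target))
    return best


def guided_search(target, budget, rng):
    """Hill climbing: flip one bit, keep the change unless the score drops."""
    current = [rng.randint(0, 1) for _ in range(N_BITS)]
    current_score = score(current, target)
    for _ in range(budget):
        i = rng.randrange(N_BITS)
        current[i] ^= 1
        new_score = score(current, target)
        if new_score >= current_score:
            current_score = new_score
        else:
            current[i] ^= 1  # revert a harmful change
    return current_score


rng = random.Random(42)
target = [rng.randint(0, 1) for _ in range(N_BITS)]
print("random:", random_search(target, 2000, rng))
print("guided:", guided_search(target, 2000, rng))
```

With the same budget of 2,000 evaluations, random sampling has roughly a 2,000 in 2^40 chance of hitting the exact target, whereas the hill climber only needs to visit each wrong bit once.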

Many contemporary algorithms, such as LLMs, have been trained on a cultural repertoire of unprecedented scale

language barriers, or scientific disciplines—intelligent machines can produce novel recombinations that may be beyond humanity’s reach. Irrespective of whether LLMs truly exhibit understanding

But machines may also augment human exploration. Conditional generative AI, like DALL-E, allows users to steer the generative process through text prompts, enabling experimentation with diverse concepts and visualizations without the need for advanced artistic skills

Transmission

The transmission of cultural information occurs via social learning, defined as learning that is influenced by the observation of another individual and/or its products

Intelligent machines will increasingly be involved in the preservation and transmission of cultural information. Cultural evolution has supplied humans with increasingly efficient tools to preserve cultural information. The invention of writing, for instance, allowed humans to mitigate cultural loss by recording information in a more permanent way. Theoretical and empirical studies of cultural evolution have shown that the stability of cultural information strongly depends on both the size of the population that shares information

instance) 16. Besides serving as a persistent medium of cultural storage analogous to a book, machines can learn to seek and transmit information

As machines store and transmit cultural information, they may reproduce and transmit biases inherent to human culture, for example through biased training datasets; but they also offer potential to mitigate those. Machine learning models reproduce various content biases inherent to their training data, including gender bias, racial or ethnic bias, bias for negative and threat-related information, and socioeconomic bias

Cultural transmission can be impacted not only by the replication of biases but also by disparities between human and algorithmic biases. Examples of biases found in humans are confirmation bias 25, availability bias 26, and a bias towards specific symmetries 27. Despite their reputation for undermining optimal decision-making, biases can actually reflect optimal decisions within a particular socio-environmental context under cognitive constraints such as memory, computation, or experience

Machines’ increased computational capacity might additionally affect the feasibility of accumulating uncompressed information via a “big data” approach. The compressibility of information is the inverse of its algorithmic complexity: compressing information is achieved by creating a rule that is shorter than a complete list of the data itself.
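Algorithmic complexity is uncomputable in general, but an off-the-shelf compressor gives a practical upper bound on it. As a minimal sketch, assuming `zlib` compressed size as a stand-in for description length, data generated by a short rule compresses far better than pseudo-random bytes of the same length:

```python
import random
import zlib


def compressed_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


n = 10_000
regular = b"ab" * (n // 2)  # generated by a short rule: repeat "ab"
noisy = random.Random(0).randbytes(n)  # no short rule to exploit (Python 3.9+)

print(f"regular: {compressed_ratio(regular):.3f}")
print(f"noisy:   {compressed_ratio(noisy):.3f}")
```

The regular string shrinks to a tiny fraction of its size, while the noisy bytes stay at roughly their original length; storing the latter faithfully is the “uncompressed” regime that large machine memory makes more affordable.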

Selection

Culture evolves in part through the selective retention of cultural traits. In the context of machine culture, selection can occur at a level where machines select what and from whom humans learn, at a level where humans select machine behavior, and at a level where there is selection between humans and machines.

When it comes to what humans learn, social learning strategies shape what, when, and whom we copy. These strategies can be broadly categorized into content- and context-based strategies

For instance, content-based filtering algorithms aim to maximize the similarity between items a user previously showed interest in and unobserved items. Emulating context-based strategies in selection, ranking algorithms typically sort items according to a relevance score, which is based on the items’ popularity
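The two selection mechanisms just described can be sketched side by side. Assuming hypothetical item feature vectors and view counts, a content-based filter ranks unseen items by cosine similarity to a profile averaged from the user's past items, whereas a popularity ranking orders items by view count and is identical for every user. Item names, features, and counts are invented for illustration.

```python
import math

# Hypothetical item features: (sport, music, politics) scores.
features = {
    "trail_running_tips": (0.9, 0.0, 0.1),
    "marathon_playlist": (0.6, 0.8, 0.0),
    "election_explainer": (0.0, 0.0, 1.0),
    "jazz_history": (0.0, 0.9, 0.1),
}
# Hypothetical popularity signal (e.g., view counts).
views = {"trail_running_tips": 120, "marathon_playlist": 300,
         "election_explainer": 5000, "jazz_history": 80}


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def content_based(history, k=2):
    """Rank unseen items by similarity to the mean of previously liked items."""
    dims = len(next(iter(features.values())))
    profile = [sum(features[i][d] for i in history) / len(history) for d in range(dims)]
    candidates = [i for i in features if i not in history]
    return sorted(candidates, key=lambda i: -cosine(profile, features[i]))[:k]


def popularity_ranked(k=2):
    """Rank all items by view count, regardless of who the user is."""
    return sorted(features, key=lambda i: -views[i])[:k]


print(content_based(["trail_running_tips"]))  # prints ['marathon_playlist', 'election_explainer']
print(popularity_ranked())  # prints ['election_explainer', 'marathon_playlist']
```

The content-based list depends on what this user has engaged with, while the popularity list mirrors a conformist, context-based cue: whatever many others have viewed rises to the top.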

Another dimension along which algorithms may influence the selective retention of cultural traits pertains to social networks, which form the backbone of information exchange. In this context, social ties are rewired as users follow algorithmic recommendations based on user attributes, such as popularity, or on similarities in user preferences, in both personal (X: “who to follow”) and professional domains (LinkedIn: “People you may know”). Link recommendation algorithms have the potential to shape the overall evolution of social networks 32 33 34. X’s “who to follow” recommendation was observed to disproportionately benefit users who were already the most popular, fueling “rich get richer” dynamics 34. A growing body of research documents a complex but persistent and critical relationship between the structure of social networks and collectives’ ability to collaborate, coordinate, and solve problems 35 36 37, abilities that ultimately shape cultural repertoires 38 39.
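The “rich get richer” dynamic can be illustrated with a toy growth model. In this sketch, which is not X's actual algorithm, each newly arriving user follows one existing account. With a hypothetical `popularity_bias` flag on, the account is drawn with probability proportional to its follower count plus one (preferential attachment); with it off, the account is drawn uniformly at random. The function names and network size are illustrative assumptions.

```python
import random


def grow_network(n_users=1000, popularity_bias=True, seed=0):
    """Return follower counts after users join one at a time and follow one account."""
    rng = random.Random(seed)
    followers = [0]  # start with a single account
    for _ in range(1, n_users):
        if popularity_bias:
            weights = [f + 1 for f in followers]  # popular accounts are favored
            target = rng.choices(range(len(followers)), weights=weights, k=1)[0]
        else:
            target = rng.randrange(len(followers))
        followers[target] += 1
        followers.append(0)  # the newcomer becomes a followable account
    return followers


def top_share(followers, fraction=0.01):
    """Share of all follows held by the most-followed `fraction` of accounts."""
    ranked = sorted(followers, reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return sum(ranked[:k]) / sum(ranked)


for flag in (True, False):
    print("popularity_bias =", flag, "->", round(top_share(grow_network(popularity_bias=flag)), 3))
```

Comparing the two runs shows how much more concentrated attention becomes when recommendations reward existing popularity; the exact shares depend on the seed and network size.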

While machines have most commonly relied on exploiting explicit user preferences and historical behavior (e.g., ratings, engagement), there has been a growing interest in considering users’ internal states to improve algorithmic recommendations. For instance, users might be uncertain about their preferences — especially in domains in which they lack expertise. Bayesian approaches can model users’ uncertainty and be used to update algorithmic recommendations as the user interacts with the system 40. As another example, recommender systems might account for the cognitive cost of exploring items or learning about them by prioritizing items that are easier for users to evaluate or learn 41. Affective recommender systems 42 use techniques such as natural language processing to make inferences about users’ emotional states, and even combine them with other context information such as the recommendation domain (e.g., music or movies) 43 44 45. While recommender systems have so far been mostly shaping user preferences implicitly (e.g., by optimizing the position or ranking of content), LLMs may accelerate developments where users are increasingly persuaded explicitly through interactive argumentation.
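As a minimal sketch of how a Bayesian recommender might represent a user's uncertainty, assume each item category has an unknown probability that the user will like it, and place a Beta prior on that probability. Every like or skip then updates the posterior in closed form, and the posterior variance quantifies how unsure the system still is. The Beta-Bernoulli model and the class name `PreferenceBelief` are illustrative assumptions, not the specific approach of the cited work.

```python
from dataclasses import dataclass


@dataclass
class PreferenceBelief:
    """Beta(alpha, beta) belief about the probability that a user likes a category."""
    alpha: float = 1.0  # prior pseudo-count of likes (uniform prior by default)
    beta: float = 1.0   # prior pseudo-count of skips

    def update(self, liked: bool) -> None:
        # Conjugate update: a like adds to alpha, a skip adds to beta.
        if liked:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    @property
    def variance(self) -> float:
        total = self.alpha + self.beta
        return self.alpha * self.beta / (total ** 2 * (total + 1))


jazz = PreferenceBelief()  # hypothetical category
for liked in (True, True, True, False):
    jazz.update(liked)
print(jazz.mean, jazz.variance)
```

A recommender could, for instance, favor categories whose posterior is still wide (exploration) or sample from the posterior (Thompson sampling); which rule to use is a design choice beyond this sketch.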

Downstream consequences of selection by machines may often be specific to particular environments, algorithmic models, and feedback loops. However, one feature that generalizes across contexts is that algorithms, by virtue of the underlying business models, are often geared towards maximizing user engagement for profit 46. In social networks, this may be achieved by promoting content congruent with users’ past engagement or ingroup attitudes 47, or content that humans inherently attend to, such as emotionally and morally charged content 48 49. Examples include information that relates to threat or elicits disgust, as shown in transmission chain experiments inspired by cultural evolutionary theory 50. The algorithmic amplification of such content may then feed back into human social learning, for instance by inflating beliefs about the normative value of expressing moral outrage 51 52, increasing out-group animosity 53, or creating echo chambers and filter bubbles 54 55 56. Importantly, user engagement is a signal of value to both users and the platforms deploying algorithms, connecting them in complex feedback loops 57 58: recommender systems react to user engagement by selecting the types of content people engage with, and users in turn react to recommender systems, both directly (clicking, viewing, purchasing) and through what they produce, as content creators anticipate what will receive the widest distribution. These feedback loops, together with deliberate product design choices and policy approaches, provide potent leverage points for aligning recommender systems with human values 59. A promising approach to this challenge could involve examining potential misalignments between users’ engagement and their own preferences, and identifying the boundary conditions that determine when maximizing user engagement enhances user welfare and when it produces the opposite effect 60.

Algorithmic systems more generally offer powerful ways to bridge social divides, for instance by designing selection policies that steer users’ attention to content that increases mutual understanding and trust 61 62, or by identifying and promoting links in social networks that can effectively mitigate polarizing dynamics.
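The engagement feedback loop between ranking and content creators discussed above can be sketched with a deterministic toy model. Assume, purely for illustration, two content types with fixed per-impression engagement rates (the values are hypothetical, not empirical estimates). The platform allocates exposure in proportion to the total engagement each type generated, and creators shift a fraction `adapt` of their production toward the exposure share each round.

```python
def feedback_loop(share_charged=0.2, rate_charged=0.3, rate_other=0.1,
                  adapt=0.5, rounds=20):
    """Trajectory of the production share of emotionally charged content."""
    shares = [share_charged]
    s = share_charged
    for _ in range(rounds):
        # Platform: exposure proportional to the engagement each type generated.
        engaged_charged = s * rate_charged
        engaged_other = (1 - s) * rate_other
        exposure = engaged_charged / (engaged_charged + engaged_other)
        # Creators: move production partway toward what gets distributed.
        s = s + adapt * (exposure - s)
        shares.append(s)
    return shares


trajectory = feedback_loop()
print([round(x, 2) for x in trajectory[::5]])
```

Under these assumptions, the charged share rises every round whenever its engagement rate exceeds the other type's, even though no individual creator sets out to amplify it; with equal rates, the share stays where it started.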

Human preferences, in turn, can directly shape machine behavior, in particular through reinforcement learning with human feedback
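A common ingredient of RLHF pipelines is a reward model fitted to pairwise human preferences, often with a Bradley-Terry likelihood, P(A preferred to B) = σ(r_A − r_B). The sketch below fits scalar rewards for three hypothetical responses by gradient ascent on that likelihood. Real systems learn the reward as a neural network over text and then optimize a policy against it; that step is omitted entirely here.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def fit_rewards(comparisons, items, lr=0.1, epochs=200):
    """Fit Bradley-Terry scores from (winner, loser) pairs by batch gradient ascent."""
    reward = {item: 0.0 for item in items}
    for _ in range(epochs):
        grad = {item: 0.0 for item in items}
        for winner, loser in comparisons:
            # Gradient of log sigmoid(r_w - r_l): +(1 - p) for winner, -(1 - p) for loser.
            p = sigmoid(reward[winner] - reward[loser])
            grad[winner] += 1.0 - p
            grad[loser] -= 1.0 - p
        for item in items:
            reward[item] += lr * grad[item]
    return reward


# Hypothetical annotator judgments: "a" beats "b", "b" beats "c", "a" beats "c".
prefs = [("a", "b"), ("b", "c"), ("a", "c")]
r = fit_rewards(prefs, ["a", "b", "c"])
print({k: round(v, 2) for k, v in r.items()})
print(round(sigmoid(r["a"] - r["c"]), 3))
```

The learned scores recover the annotators' ordering, which is the sense in which human preferences select machine behavior: whatever the reward model ranks highly is what later optimization will favor.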

Humans select machine behavior also through direct creation and curation of training data for machine learning, and through more indirect interactions with machine-generated outputs. Despite efforts to watermark machine-created content

Yet another pathway for human selection of machine behavior pertains to general machine properties. For instance, humans may choose between different intelligent machines available on the market based on factors such as preferences, cost, usefulness, harmfulness, and alignment with regulatory requirements. The language model LLaMA, for example, recently gained attention for its relatively small size, which makes it more cost-effective to use.

Selection is also bound to occur at a level where machines or humans are favored over one another. For instance, the ability of machines to process vast amounts of information both quickly and accurately affords them a competitive edge in numerous cognitive tasks, such as strategic gameplay and information retrieval. Relatedly, due to their cost-effectiveness and efficiency, intelligent machines may grow into the main workforce across various professional domains.

Grand challenges and open questions

We now suggest a broad research agenda for computational and behavioral scientists interested in the phenomenon of Machine Culture.

Measurement

One major open challenge is to quantify how much of human cultural dynamics is attributable to algorithmic processes. For instance, it is difficult to fully disentangle the effects of ranking and recommendation algorithms on culture from those of alternative processes of human social learning, such as communication technology, institutions, and social practices. Since the inception of human culture, derived tools have played an important role in shaping cultural processes, making the establishment of a baseline a challenging question in itself. Good estimates are a precondition for optimizing the usefulness of these algorithms while avoiding undesirable cultural impacts such as polarization.

A complementary question is how to quantify the influence of machine-generated artifacts – e.g., artwork, literature, music – on human cultural production in these areas. As generative AI becomes more commonplace, distinguishing intrinsic human culture from machine-generated culture or machine-influenced culture becomes even more challenging, especially as watermarking techniques may not be universally adopted. Despite popular media claims about machine-generated art developing its own unique style

Another measurement concern relates to the quantification of the cultural regularities encoded in LLMs and other AI models. Even prior to the rise of LLMs, social media platforms like Facebook already possessed fairly detailed quantitative models of cultural regularities and differences.

Societal Decision-making

We currently observe a rapid increase in the diversity of AI models, including LLMs, accelerated by the open-source software movement. However, forces such as regulation and market power may result in a world dominated by a small number of monolithic models. This raises the possibility of a reduction in cultural diversity, as major social, political, and economic forces try to shape global machine culture to match their preferences. This process may be amplified by feedback loops in which LLMs are trained on an ongoing basis on synthetic data, or on human data that contains much machine-generated text. Preliminary evidence points to the possibility of model collapse, with models losing diversity and converging to a state with low variance.
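A stylized illustration of such collapse fits in a few lines. The sketch assumes that each “model generation” is a Gaussian fitted by maximum likelihood to a small sample (`n` draws) produced by the previous generation. Sampling noise and the downward bias of the maximum-likelihood variance compound across rounds, so the fitted spread tends to shrink toward zero. This is a toy analogue under those assumptions, not a model of any particular LLM.

```python
import random
import statistics


def recursive_fit(generations=200, n=10, seed=0):
    """Fit a Gaussian, sample from it, refit on the samples, and repeat."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the original "human" data distribution
    history = [sigma]
    for _ in range(generations):
        samples = [rng.gauss(mu, sigma) for _ in range(n)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples, mu)  # maximum-likelihood estimate
        history.append(sigma)
    return history


sigmas = recursive_fit()
print([f"{s:.2e}" for s in sigmas[::50]])
```

Increasing `n` slows the shrinkage, loosely analogous to mixing more fresh data into each round; the point of the sketch is qualitative.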

Conversely, we face a potential ‘Tower of Babel’ scenario. As AI models become increasingly personalized, conforming to and reinforcing our individual worldviews, they risk engendering an unprecedented fragmentation of our shared perception of the world. In the biblical story

Against this background, a key research agenda is to quantify the degree to which a given AI model, or ecosystem of models, exhibits uniformity or diversity. It is also imperative to understand what constitutes a ‘healthy’ level of diversity, one that retains local sovereignty while also fostering collective human flourishing.
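One simple way to operationalize uniformity versus diversity, assuming model outputs can be grouped into discrete categories (a strong simplification for free text), is the Shannon entropy of the output distribution or its exponential, the effective number of distinct outputs. The model outputs below are hypothetical.

```python
import math
from collections import Counter


def shannon_entropy(outputs):
    """Shannon entropy (in nats) of the empirical distribution of outputs."""
    counts = Counter(outputs)
    total = len(outputs)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def effective_number(outputs):
    """Exponential of the entropy: the 'effective' count of equally common outputs."""
    return math.exp(shannon_entropy(outputs))


# Hypothetical answers from two models to the same open-ended prompt.
model_a = ["tea", "coffee", "mate", "chai", "cocoa", "kombucha"]
model_b = ["coffee", "coffee", "coffee", "coffee", "coffee", "tea"]
print(round(effective_number(model_a), 2), round(effective_number(model_b), 2))
```

Model A behaves like six equally likely answers, while model B behaves like fewer than two; the same measure could be applied across an ecosystem of models, although deciding what counts as a ‘healthy’ value remains the open question raised above.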

Ensuring that AI models, such as LLMs, reflect the beliefs and values of a given community requires mechanisms for societal decision-making about what knowledge goes into the models

A related challenge is how to ensure long-term monitoring of Machine Culture. Similar to the notion of human-in-the-loop control of intelligent machines, we can aspire towards society-in-the-loop control of the complex phenomena of Machine Culture

Suppose a community knows which cultural beliefs and values it wants to encode in an AI model. The next question is how to ensure that these are indeed present in the model. One approach is to carefully curate the dataset on which the model is trained

well understood. There is a need for methods to check which cultural beliefs and values have been learned, inferred, or encoded in a given AI model and the degree to which it aligns with a target culture.

Long-term Dynamics & Optimization

In all likelihood, the future of culture will be hybrid, with cultural artifacts—scientific theories, industrial processes, art, literature—being created by a combination of human and machine intelligence. This raises a suite of open questions relating to the long-term dynamics of human-machine co-evolution. These dynamics may lead to diverse phenomena ranging from different forms of human-machine mutualism, to Red Queen effects (an evolutionary arms race between humans and machines) that characterize the co-evolution of both forms of intelligence

A related question is how to optimize the aforementioned dynamics, in order to combine human and machine intelligence in an ideal or safe manner

Conclusion

We asked GPT-4 to first write a compressed version of this Perspective and then to provide a conclusion. It suggested the following (minimal editing to align nomenclature has been applied). The symbiosis of human and machine intelligence is forging a new epoch of cultural evolution. This Perspective highlights the transformative role of intelligent machines in reshaping creativity, redefining skill value, and altering human interactions. Central to the discourse is the triad of cultural evolution: variation, transmission, and selection, and how machines interface with each. The interaction is multifaceted, from generative AI birthing novel cultural artifacts to recommendation algorithms influencing individual perspectives. However, the crux remains in understanding and navigating the challenges and opportunities that arise from this hybridization of culture. As the imprints of intelligent machines grow deeper, it’s imperative to ensure a harmonious co-creation of culture where both human and machine augment, rather than eclipse, each other. This not only broadens the horizons of cultural exploration but also fortifies the tapestry of human experience in the age of intelligent machines.

Author contributions

Acknowledgments

Competing interests

The authors declare no competing interests.

References

[references omitted]

data. Am. Sociol. Rev. 85, 477–506 (2020). https://doi.org/10.1177/0003122420921538.

[references omitted]

[references omitted]

[references omitted]

[references omitted]

[references omitted]

Preprint at arXiv (2019).

[references omitted]

[references omitted]

[references omitted]

[references omitted]

[references omitted]

[references omitted]

[references omitted]

sentiment analysis in collaborative recommender systems. PLoS ONE 16, e0248695 (2021). https://doi.org/10.1371/journal.pone.0248695.

[references omitted]

arXiv (2023).

[references omitted]

[references omitted]

arXiv (2023).

[references omitted]

[references omitted]

[references omitted]

[references omitted]
