Because this book has followed a rough chronology, beginning four billion years ago and working its way through the historical developments that led to computing, cybernetics, neuroscience, and AI, many of the figures we’ve encountered so far are historical. Hindsight helps us judge where they got things right or wrong, though it also risks oversimplification. Some figures are canonized, while others are unfairly minimized or erased. Some are tarred because they held beliefs that were mainstream in their day, but no longer are, or that were bold, but were later proven wrong, sometimes laughably (or horrifyingly) so.

If we judge prolific thinkers, living or dead, by their worst recorded ideas, nobody comes out looking good. Except possibly Alan Turing. He might have been right about everything.1 Everyone else is a mixed bag, and that is the best any of us can reasonably aspire to—if we are remembered at all.

Von Neumann’s roles in the early development of the computer and in the early stages of the nuclear arms race were inseparable.

For example, I’ve emphasized John von Neumann’s brilliant contributions to computer science, alongside Turing’s. But in 1950, with the Soviet Union still far behind the US in building a nuclear arsenal, von Neumann, ever the game theorist, advocated a pre-emptive nuclear strike: “If you say why not bomb them tomorrow, I say why not today? If you say today at five o’clock, I say why not one o’clock?” 2 Had von Neumann been empowered to pursue his policy, he would be remembered as a monster and mass murderer.

Major Kong riding down the bomb in Dr. Strangelove, Kubrick 1964

Or consider Lynn Margulis, the brilliant, rebellious biologist who did more than anyone else in the twentieth century to advance the symbiogenetic view of evolution and the Gaia hypothesis. Margulis was also an AIDS denier, claiming as late as 2011 (the year she died) that the symptoms were caused by latent syphilis infections in “high-risk” populations.3 She was a stubborn proponent of the conspiracy theory holding that the 9/11 terrorist attacks were an inside job, too.4 Her best and worst intellectual legacies were both products of the same imaginative mind and contrarian sensibility. So … Turing aside, this book has no perfect heroes.

Neither does it have villains. GOFAI pioneers like Minsky and Papert may have been wrong to dismiss perceptrons, but they made major contributions to computer science. I’ve emphasized the incorrectness of Leibniz’s take on computable truth, and the absurdity of Descartes’s dualist concept of a spiritual homunculus blowing psychic pneuma into the pineal gland. But Descartes and Leibniz were both brilliant thinkers in their day, and their errors were largely the uncorrected residue of ideas they inherited from their predecessors.

Now, as we near the end of the book, I need to engage critically with the ideas of the living. Given the contentious intellectual climate today, it feels important to frame the terms of my engagement. I will argue against positions held by good friends of mine, like Melanie Mitchell, Ted Chiang, Joanna Bryson, and Christof Koch, and take issue with arguments made in recent books by Nick Bostrom, Max Tegmark, Ray Kurzweil, and Yuval Noah Harari, among others.

But before focusing on points of difference, I want to emphasize that these are all intelligent people. I have learned a great deal by reading their work and engaging them in conversation, and agree with many of the points they raise. Our divergent conclusions often boil down to surprisingly subtle nuances in perspective.

If this book focused on the points of broad agreement, it would be a much longer one. But that wouldn’t be a book worth taking the time to write, or to read. Per Mercier and Sperber’s The Enigma of Reason, one of the main functions of language is to argue, and, through argument, to help us collectively inch forward.

Like so much else, what is adversarial is also collaborative. Mitchell, Chiang, Bryson, Koch, Bostrom, Tegmark, Kurzweil, Harari, and many others are, like me, sticking their necks out to engage deeply in difficult questions with real stakes. We all care about the future and share many basic values. We’re all on the same team, despite the fractious way the media tends to portray contemporary debates about AI, or anything else of consequence.

In years past, when Silicon Valley ran on a giddy optimism verging on the utopian, I often came across as the grumpy pessimist in the room. Raising historical perspectives and arguing against techno-determinism felt to me like an important corrective amid a culture that sometimes seemed convinced we had reached the “end of history” 5 and were on a glide path to universal liberal democracy, abundance for all, and digital immortality.

Sometime in the mid-2010s, the mood started to sour. It was probably due to a combination of factors: rising economic inequality and the erosion of the middle class; the resurgence of populist politics; the divisiveness of social media; a growing awareness of the double-edged nature of technology; increasing distrust of corporations in general, and tech companies in particular.

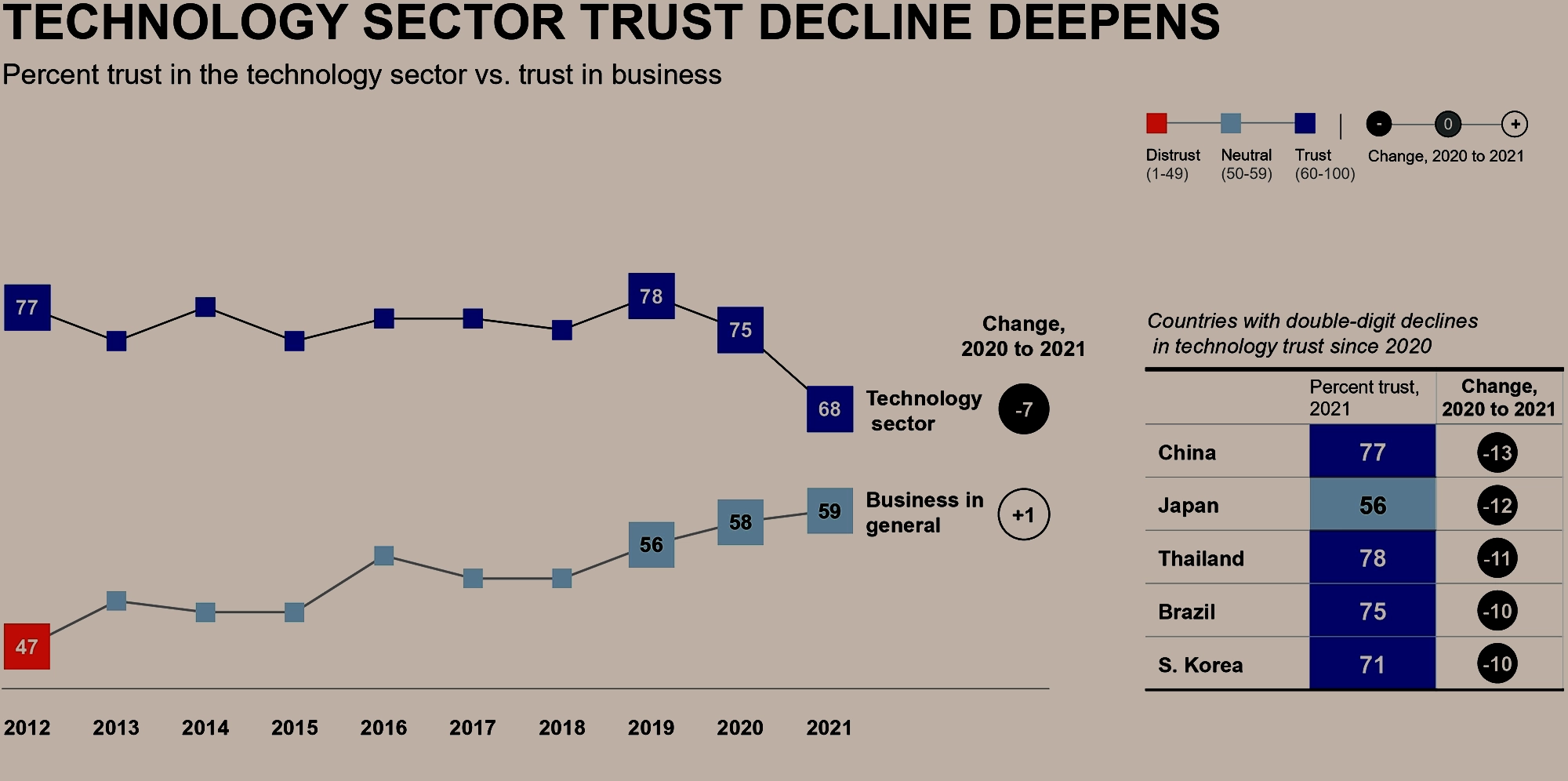

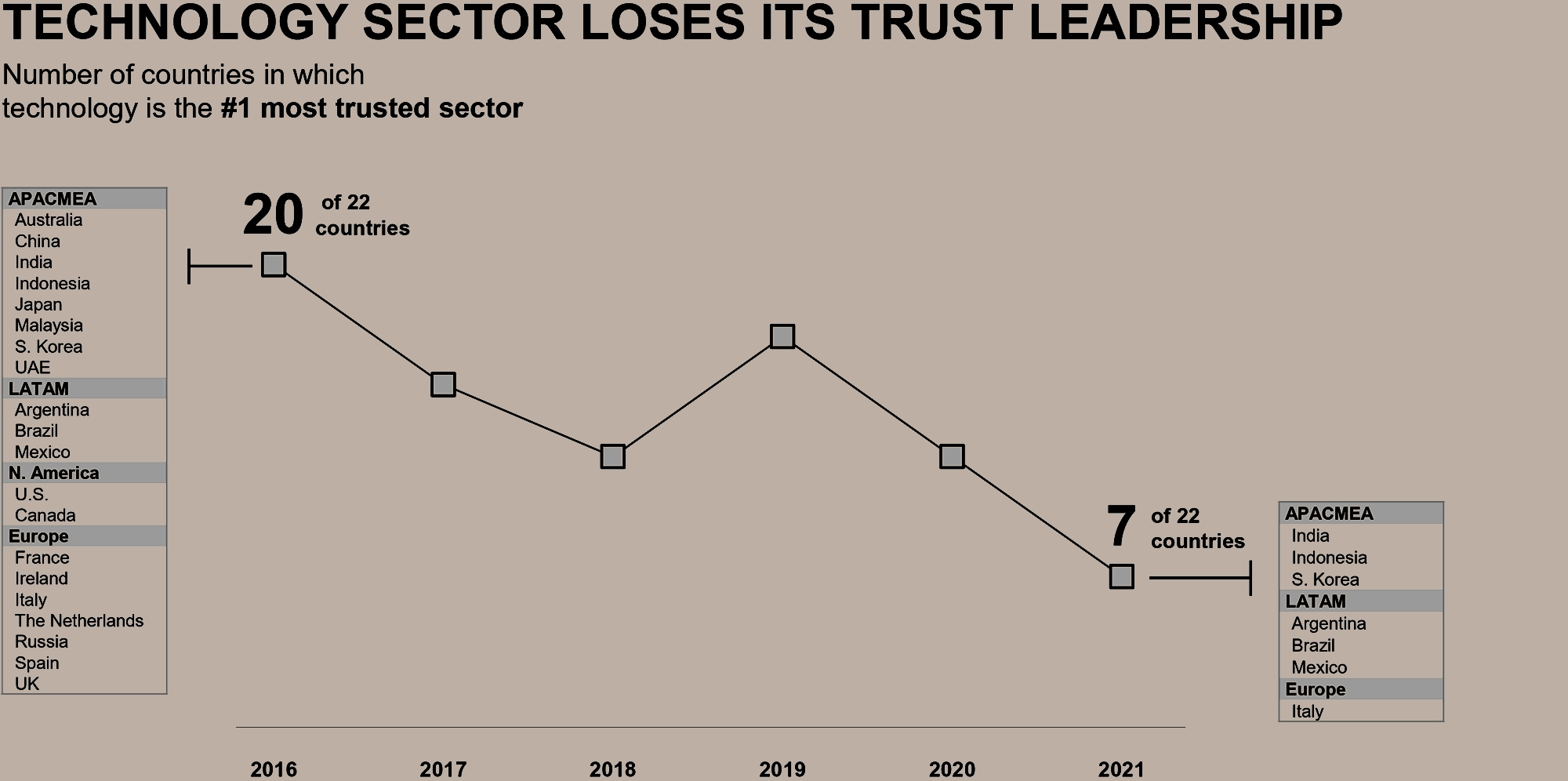

Longitudinal survey data shows a systematic decline in trust in the tech sector throughout the 2010s; Edelman 2021.


Around the same time, progress in AI began to accelerate dramatically, and so it got caught up in this larger zeitgeist. Some Silicon Valley insiders who had until recently been preaching the gospel of a techno-paradise just around the corner reversed themselves, becoming obsessed with visions of an imminent AI apocalypse. The old gospel of plenty had been predicated on the idea that we were living in unprecedented times. The new talk of apocalypse is also predicated on the idea that we’re living in unprecedented times. So now, my attempts at historical perspective can make me sound like the optimist in the room!

My thinking has certainly evolved over the past decade, but it has remained consistent in one respect: I have always believed that debate and discussion matter. I am optimistic. But the arrow of time, wherein simpler entities tend to combine into more complex ones, does not imply techno-determinism, nor does it give us license to be passive.

Aldous Huxley in 1961 opining on technological determinism: the idea that technology and “applied sciences” now follow their own course, carrying humanity along for the ride, as opposed to humanity being “in control” (as he implies used to be the case). Since modern humanity is a profound, collective symbiosis including technology as well as biology, neither the statement “technology is in control of humanity” nor “humanity is in control of technology” is coherent. Neither is it obviously the case that individual or collective human agency has been curtailed by this symbiosis; we are less free in some ways and freer in others.

Where there is life, there is choice. Societies have free will for the same reasons people do, and our prospects remain hopeful only and precisely to the degree that we’re able to be intelligent: to model, predict, explore, and decide between counterfactual futures.

Symbioses can occur in many ways, and, accordingly, life on Earth can evolve in many ways. Some of these paths have greater dynamic stability than others, and some are more appetizing than others. In some, many of our longstanding wishes will come true. Others could indeed involve the partial or wholesale extinction of existing entities, including humans. That risk has been on the table ever since von Neumann said, “why not one o’clock?”