year:

paper: brainca-brain-inspired-neural-cellular-automata-and-applications-to-morphogenesis-and-motor-control

website:

code:

connections: NCA, Benedikt Hartl, levin

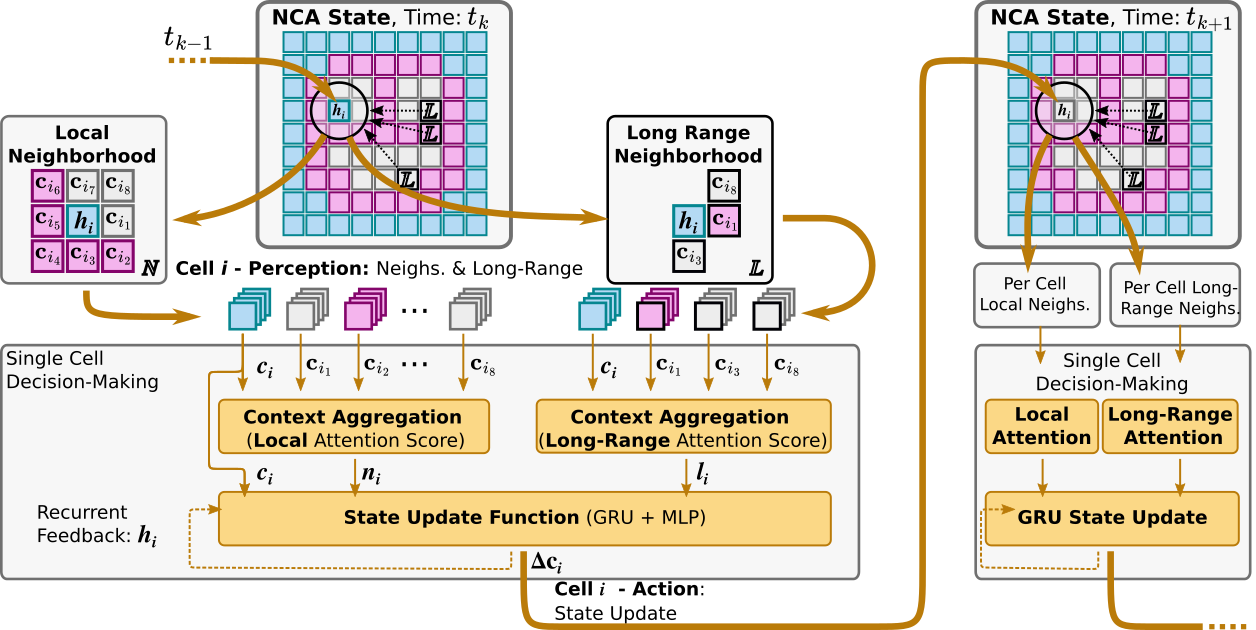

Notation

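$\mathbf{c}_i^t$ … cell state of cell $i$ at time $t$

… optional conditioning input (positional encoding, prev action, …)

$\mathbf{s}_i^t$ … extended state

$\mathcal{N}_i$ … local neighborhood indices

$\mathcal{L}_i$ … long-range neighborhood indices

$a_{ij}^t$ … raw local attention score

$b_{ij}^t$ … raw long-range attention score

$\alpha_{ij}^t$ … normalized local attention weight

$\beta_{ij}^t$ … normalized long-range attention weight

$f_{\text{attn}}^{(\mathcal{N})}$ … local attention MLP

$f_{\text{attn}}^{(\mathcal{L})}$ … long-range attention MLP

$\mathbf{h}_i^t$ … GRU hidden state (values aggregated by attention)

$\mathbf{n}_i^t$ … aggregated local signal

$\mathbf{l}_i^t$ … aggregated long-range signal

$\mathbf{z}_i^t$ … interaction vector

$f_Z$ … composition operator for $\mathbf{s}_i^t, \mathbf{n}_i^t, \mathbf{l}_i^t$

$\mathbf{m}_i^t$ … message MLP output, GRU input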

… message MLP

… system-level feedback (e.g. one-hot prev action)

… external observation

$f_{\text{GRU}}$ … GRU cell

… refinement residual

$f_{\text{refine}}$ … refinement MLP

$\boldsymbol{\ell}_i^t$ … per-cell action logits (lunar lander)

… per-cell fire probability

… cells in action region

… region-level action logit

… action policy

… number of cells

… cell state dim

… extended state dim

… attention score dim

… message dim

… final timestep

Limitations / obvious next steps

- GRU, not QKV-attention-memory

- With graph attn there's also no any2any between the msgs per node (not sure if it matters, prlly not if u factor in efficiency and diffusion over time)

- Hidden state is public / the same as the public message, not compressed / …

- Long-range connectivity is fixed (Zipf’s law)

- All cells get (the same) observations…

- Arrangement affects performance and learning speed - let the cells arrange themselves

- Growth instead of fixed structure path: weights are the genome, evolved slowly over many generations of growth, with growth happening during rollouts (i.e. the genome only meta-learns). This prlly (definitely) needs some pre-training to have any chance of working. Perhaps… pre-pre-training the NCA on CAs…

Unclarities

- Why does the morpho experiment use additive fusion for the interaction vector while lunar lander concatenates? Not motivated in the paper.

- Why don't they do all-to-all comms in the attn aggregation? Efficiency? Unnecessary (the same info spreads out over timesteps anyway)? Maybe full attn is only worth it for internal states.

- Lunar lander readout: the paper's prose says "output is split into two heads", but the equations write both heads as reading from $\tilde{\mathbf{h}}_i^t$ directly. : , but , suggests the prose is right and the equations are wrong. It also makes more sense that way…

BraiNCA

Init: (morpho) or (lunar). broadcast to all cells.

Repeat for steps (morpho: total; lunar: per env step), cells ():

Approach to actions, lunar lander

- divided into regions

- softmax over logits → fire probability

- majority vote, confidence weighted

- Within each region, compute a weighted average of the cells' fire logits, weighted by each cell's fire probability (its confidence), to get the region-level action logit

- softmax over the region-level action logits to get the action dist to sample from
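The readout steps above can be sketched in plain Python. This is only a minimal sketch under assumptions: the function names, the region layout, and the mapping of one region to one discrete action are hypothetical, and the exact normalization used in the paper may differ.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def region_action_distribution(cell_logits, regions, eps=1e-12):
    """Turn per-cell (noop, fire) logits into a distribution over regions.

    cell_logits: list of (noop_logit, fire_logit), one pair per cell.
    regions: list of lists of cell indices (hypothetical layout, one list
             per action region).
    """
    region_logits = []
    for cells in regions:
        weighted_sum, weight_total = 0.0, 0.0
        for i in cells:
            noop, fire = cell_logits[i]
            p_fire = softmax([noop, fire])[1]  # per-cell fire probability
            weighted_sum += p_fire * fire      # confidence-weighted fire logit
            weight_total += p_fire
        # Weighted average of fire logits within the region.
        region_logits.append(weighted_sum / (weight_total + eps))
    # Softmax across regions gives the action distribution.
    return softmax(region_logits)


def sample_action(probs, rng=random):
    """Sample a region index (assumed here to map to one action)."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Toy example: two regions with two cells each.
cell_logits = [(0.0, 2.0), (0.0, 2.0), (1.0, -1.0), (1.0, -1.0)]
regions = [[0, 1], [2, 3]]
probs = region_action_distribution(cell_logits, regions)
action = sample_action(probs)
```

Weighting by fire probability means confident cells dominate their region's logit, so a few unsure cells do not wash out the vote.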

Detailed

Extended state bundles all non-cell-state inputs. What it contains is task-dependent:

- Morphogenesis: nothing. The cell state has 3 visible channels (cell type logits) + 6 hidden channels.

- Lunar lander: the previous fire/noop decision (1 dim) and the normalized grid position (2 dims, static positional encoding), on top of the cell state. The cell state is purely latent here (no "visible" channels, the readout is via a separate action head).

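Neighbor scoring (graph attention): $f_\text{attn}$ is Linear(2 × extended state dim, 64) → GELU → Linear(64, attention score dim), applied to the concatenated pair $[\mathbf{s}_i^t;\mathbf{s}_j^t]$. Each cell scores each of its neighbors independently. Local and long-range use separate $f_\text{attn}$: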

$$
\begin{align*}
a_{ij}^t &= f_{\text{attn}}^{(\mathcal{N})}([\mathbf{s}_i^t;\mathbf{s}_j^t]), \quad \alpha_{ij}^t = \text{softmax}_j(a_{ij}^t), \quad \mathbf{n}_i^t = \sum_{k\in\mathcal{N}_i}\alpha_{ik}^t\,\mathbf{h}_k^t \\
b_{ij}^t &= f_{\text{attn}}^{(\mathcal{L})}([\mathbf{s}_i^t;\mathbf{s}_j^t]), \quad \beta_{ij}^t = \text{softmax}_j(b_{ij}^t), \quad \mathbf{l}_i^t = \sum_{k'\in\mathcal{L}_i}\beta_{ik'}^t\,\mathbf{h}_{k'}^t
\end{align*}
$$

Scores use extended states $\mathbf{s}$, but values aggregated are GRU hidden states $\mathbf{h}$.

"Interaction vector" (cell input)

$$\mathbf{z}_i^t = f_Z(\mathbf{s}_i^t, \mathbf{n}_i^t, \mathbf{l}_i^t)$$

Morphogenesis vanilla:

Morphogenesis long-range:

Lunar lander long-range:

"Message MLP"

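In morphogenesis, the system-level feedback and the external observation are empty, so the message MLP input is just the interaction vector $\mathbf{z}_i^t$.

In lunar lander, they're simply concatenated to $\mathbf{z}_i^t$; all cells receive the same obs.

GRU update + readout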

$$
\begin{align*}
\mathbf{h}_i^t &= f_{\text{GRU}}(\mathbf{m}_i^t, \mathbf{h}_i^{t-1}) \\
\tilde{\mathbf{h}}_i^t &= \mathbf{h}_i^t + f_{\text{refine}}(\mathbf{h}_i^t)
\end{align*}
$$

$f_\text{refine}$ … the "refinement MLP" (two dense layers, $C$ units, GELU) is just a [[ResNet|resblock]].

Morphogenesis: $\mathbf{c}_i^{t+1} = \tilde{\mathbf{h}}_i^t$

Lunar lander: $\mathbf{c}_i^{t+1} = W_C \, \tilde{\mathbf{h}}_i^t$ (state projection) and $\boldsymbol{\ell}_i^t = f_\text{act}(\tilde{\mathbf{h}}_i^t)$ (action logits). $f_\text{act}$: two-layer MLP (GELU), outputs 2D (noop, fire).
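Going back to the neighbor scoring and interaction vector steps, here is a rough plain-Python sketch of the data flow: score each neighbor from the concatenated extended states, softmax over the neighborhood, aggregate the neighbors' hidden states, then fuse. Everything named here is hypothetical: `toy_score` is a stand-in for the learned attention MLP, scores are assumed to be scalars, and the fusion functions assume plain addition and plain concatenation without extra projections, which the paper may or may not use.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(i, neighbors, s, score_fn):
    """alpha_ik = softmax_k(score_fn([s_i; s_k])) over cell i's neighbors."""
    scores = [score_fn(s[i] + s[k]) for k in neighbors]  # list concat = [s_i; s_k]
    return softmax(scores)


def aggregate(i, neighbors, s, h, score_fn):
    """Weighted sum of neighbor hidden states h_k; scores come from s, not h."""
    weights = attention_weights(i, neighbors, s, score_fn)
    dim = len(h[0])
    return [sum(w * h[k][d] for w, k in zip(weights, neighbors))
            for d in range(dim)]


def fuse_add(s_i, n_i, l_i):
    """Additive fusion (the morphogenesis variant); needs equal dims."""
    return [a + b + c for a, b, c in zip(s_i, n_i, l_i)]


def fuse_concat(s_i, n_i, l_i):
    """Concatenation fusion (the lunar lander variant)."""
    return s_i + n_i + l_i


def toy_score(pair):
    """Hypothetical stand-in for the attention MLP: dot product of both halves."""
    half = len(pair) // 2
    return sum(a * b for a, b in zip(pair[:half], pair[half:]))


# Three cells with 2-dim extended states and hidden states.
s = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
h = [[0.0, 0.0], [2.0, 0.0], [0.0, 4.0]]
n_0 = aggregate(0, [1, 2], s, h, toy_score)  # local neighborhood of cell 0
l_0 = aggregate(0, [2], s, h, toy_score)     # long-range neighborhood of cell 0
z_add = fuse_add(s[0], n_0, l_0)
z_cat = fuse_concat(s[0], n_0, l_0)
```

Local and long-range aggregation would each use their own score function; the sketch reuses `toy_score` for both only to stay short.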