year: 2021/02

paper: memory-transformer.pdf

website:

code: lucidrains x-transformers: num_memory_tokens

connections: memory token, transformer

TLDR

This paper frames memory tokens as a general-purpose storage extension for the Transformer. The authors observe that standard Transformers force local and global information to compete for space within the same element-wise representations. By prepending [mem] tokens to the input, they give the model dedicated capacity to store representations that do not correspond to any specific input symbol.
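As a rough illustration of the prepending step, the sketch below works at the token-id level. The function name `prepend_memory_tokens` and the reserved ids are hypothetical. Giving each memory slot its own id (and so its own learned embedding) is an assumption, not something these notes confirm.

```python
def prepend_memory_tokens(token_ids, mem_token_ids):
    """Return (extended_ids, is_memory) with the memory ids placed in front.

    token_ids     -- ids of the real input symbols
    mem_token_ids -- reserved ids for the [mem] slots (hypothetical values)
    """
    extended_ids = list(mem_token_ids) + list(token_ids)
    # Flag which positions are memory so later code can tell them apart,
    # even though the Transformer layer itself treats all positions alike.
    is_memory = [True] * len(mem_token_ids) + [False] * len(token_ids)
    return extended_ids, is_memory


# Assumed setup: three memory slots with ids outside the normal vocabulary.
MEM_IDS = [1000, 1001, 1002]
sentence = [17, 42, 8, 99]

extended, is_memory = prepend_memory_tokens(sentence, MEM_IDS)
print(extended)   # [1000, 1001, 1002, 17, 42, 8, 99]
print(is_memory)  # [True, True, True, False, False, False, False]
```

After this step the extended sequence would pass through ordinary Transformer layers unchanged. The `is_memory` flags are only bookkeeping for analysis.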

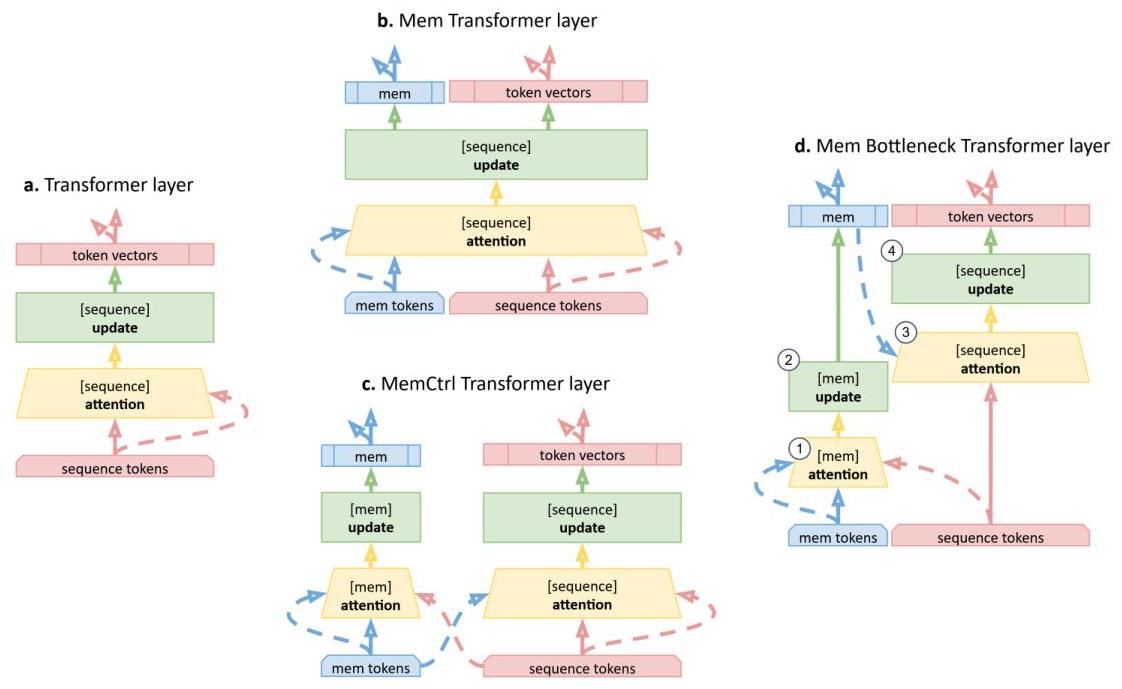

They also explore architectures like “MemBottleneck,” where attention between sequence tokens is removed entirely, forcing the model to funnel all global information exchange through the memory tokens.
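One way to picture the bottleneck is as an attention mask that blocks every sequence-to-sequence entry. This is a minimal sketch under that assumption. The paper may implement it differently (for example as separate memory and sequence update steps), and `bottleneck_mask` is a hypothetical helper name. Blocking a sequence token's attention to itself is also an assumption here.

```python
def bottleneck_mask(num_mem, seq_len):
    """Build mask[q][k]: True where query position q may attend to key position k.

    Positions 0..num_mem-1 are memory tokens, the rest are sequence tokens.
    Only the seq -> seq block is disallowed.
    """
    n = num_mem + seq_len
    mask = []
    for q in range(n):
        row = []
        for k in range(n):
            q_is_seq = q >= num_mem
            k_is_seq = k >= num_mem
            # Sequence tokens may not look at sequence tokens directly.
            row.append(not (q_is_seq and k_is_seq))
        mask.append(row)
    return mask


mask = bottleneck_mask(num_mem=2, seq_len=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# xxxxx
# xxxxx
# xx...
# xx...
# xx...
```

In the printout, memory rows can attend everywhere, while sequence rows can only attend to the two memory columns, so any exchange between sequence positions has to pass through memory.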

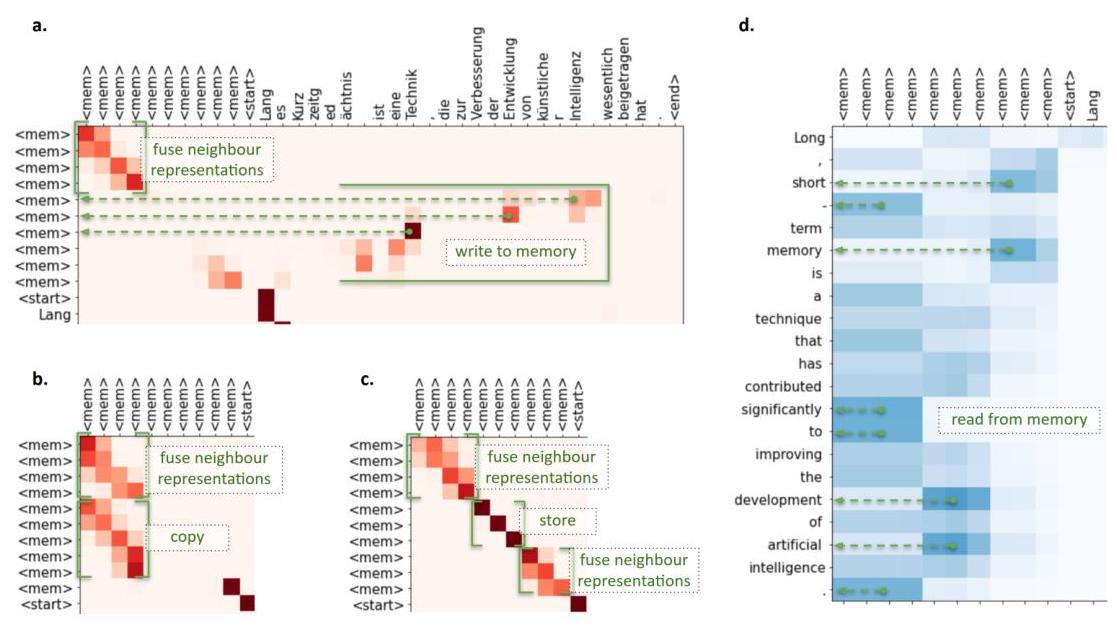

The primary benefit observed is improved performance on machine translation and language modeling, with attention maps confirming the model learns specific “read,” “write,” and “processing” operations within these tokens.

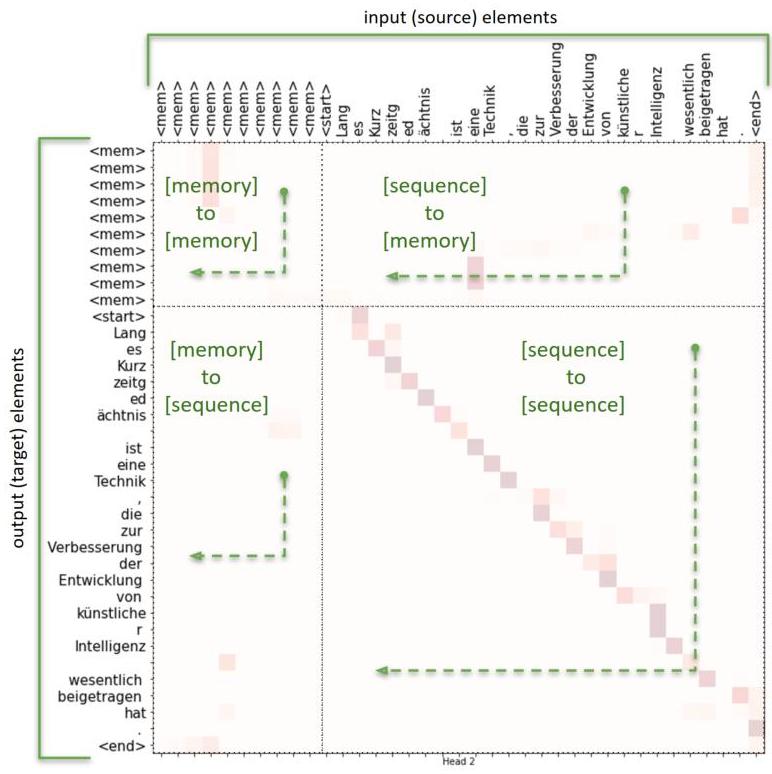

Figure: Memory modifications of Transformer architecture. (a) Transformer layer. For every element of a sequence (solid arrow), self-attention produces aggregate representation from all other elements (dashed arrow). Then this aggregate and the element representations are combined and updated with a fully-connected feed-forward network layer. (b) Memory Transformer (Mem-Transformer) prepends input sequence with dedicated [mem] tokens. This extended sequence is processed with a standard Transformer layer without any distinction between [mem] and other elements of the input.

Sharp diagonal: Self-reinforcement

Blurred diagonal: Averaging neighbors

Vertical stripe: Multiple targets copying from same source

Horizontal stripe: One target reading from multiple sources

Off-diagonal activity toward sequence: Writing sequence → memory

Attention map layout for an input of the form [mem mem mem | seq seq seq seq]. Rows are targets (Q), columns are sources (K, V).

| target (Q) \ source (K, V) | mem | seq |
| --- | --- | --- |
| mem | mem→mem (processing) | seq→mem (WRITE) |
| seq | mem→seq (READ) | seq→seq (normal) |

The “off-diagonal activity toward sequence” means: in the mem rows, you see attention peaks in the seq columns (the right side of those rows). That’s memory tokens querying sequence tokens and pulling their information in.

The asymmetry in naming is a bit confusing: “sequence → memory” means information flows from sequence to memory, but mechanically it’s memory doing the attending.
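To make the four regions concrete, the sketch below splits an attention matrix into the processing, write, read, and normal blocks and reports the average attention mass per query row in each. It assumes rows are targets (Q), columns are sources (K, V), and each row sums to 1. The function name `block_attention_mass` and the toy matrix are illustrative, not taken from the paper.

```python
def block_attention_mass(attn, num_mem):
    """Average attention mass per query row for each block of the map.

    attn    -- square list of lists, attn[q][k] = weight of target q on source k
    num_mem -- number of leading memory positions
    """
    totals = {"processing": 0.0, "write": 0.0, "read": 0.0, "normal": 0.0}
    for q, row in enumerate(attn):
        for k, weight in enumerate(row):
            if q < num_mem:
                # Memory is the target: it attends to memory or pulls from sequence.
                name = "processing" if k < num_mem else "write"
            else:
                # Sequence is the target: it reads memory or attends as usual.
                name = "read" if k < num_mem else "normal"
            totals[name] += weight

    num_seq = len(attn) - num_mem
    counts = {"processing": num_mem, "write": num_mem,
              "read": num_seq, "normal": num_seq}
    # Divide by the number of rows in each group; guard against empty groups.
    return {name: (totals[name] / counts[name] if counts[name] else 0.0)
            for name in totals}


# Toy example: one memory token followed by two sequence tokens.
attn = [
    [0.2, 0.4, 0.4],    # mem row: mostly attends to the sequence (write)
    [0.5, 0.5, 0.0],    # seq row
    [0.25, 0.0, 0.75],  # seq row
]
mass = block_attention_mass(attn, num_mem=1)
print(mass)
```

Here the memory row puts 0.8 of its weight on sequence columns, which is the "write" pattern: the memory token is the one attending, while the information moves from sequence to memory.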