year: 2022

paper: recurrent-memory-transformer

website: Yannic Kilcher: Scaling Transformer to 1M tokens and beyond with RMT (Paper Explained)

code:

connections: memory token, long-context, transformer, RNN

The idea is to get (in principle) unbounded context length by processing the input segment by segment and passing memory tokens recurrently from one segment to the next:

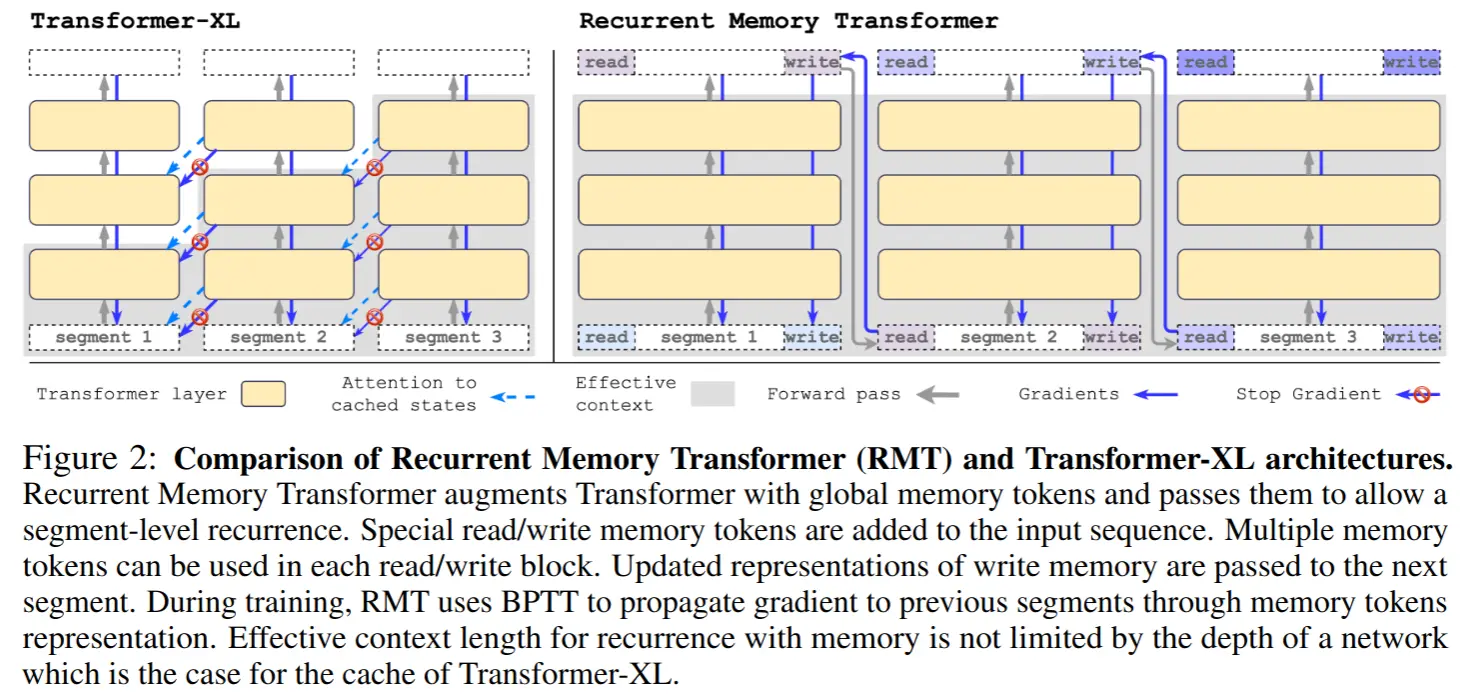

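During training, gradients flow through the recurrence (backpropagation through time, BPTT, across segments), and the memory tokens take part in full attention and get transformed by the layers. This is in contrast to Transformer-XL (TrXL), which simply caches the previous segment’s hidden states (with gradients stopped) and uses them only as extra keys/values. [1]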
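For reference, here are the two update rules side by side. This is my own transcription, using Transformer-XL's notation for layer $n$ and segment $\tau$. SG is stop-gradient and $\circ$ is concatenation along the sequence axis. For RMT's encoder variant, $H^{0}_{\tau}$ denotes the segment's input embeddings, $N$ the number of layers, $m$ the number of memory tokens, and $[1{:}m]$ the first $m$ output positions:

$$
\begin{aligned}
\text{TrXL (layer } n\text{):}\quad & \tilde{h}^{n-1}_{\tau+1} = \big[\mathrm{SG}(h^{n-1}_{\tau}) \circ h^{n-1}_{\tau+1}\big] \\
& q^{n}_{\tau+1} = h^{n-1}_{\tau+1} W_q^{\top}, \quad k^{n}_{\tau+1} = \tilde{h}^{n-1}_{\tau+1} W_k^{\top}, \quad v^{n}_{\tau+1} = \tilde{h}^{n-1}_{\tau+1} W_v^{\top} \\
\text{RMT (encoder):}\quad & \bar{H}^{N}_{\tau} = \mathrm{Transformer}\big([H^{mem}_{\tau} \circ H^{0}_{\tau}]\big), \quad H^{mem}_{\tau+1} := \bar{H}^{N}_{\tau}[1{:}m]
\end{aligned}
$$

The TrXL cache is stored per layer and is only ever used as keys/values. It is never updated, and no gradient flows into it. RMT's memory enters once at the input and is processed by every layer like any other token. It is then read off at the last layer, so a later segment's loss can send gradients back to earlier segments through $H^{mem}$.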

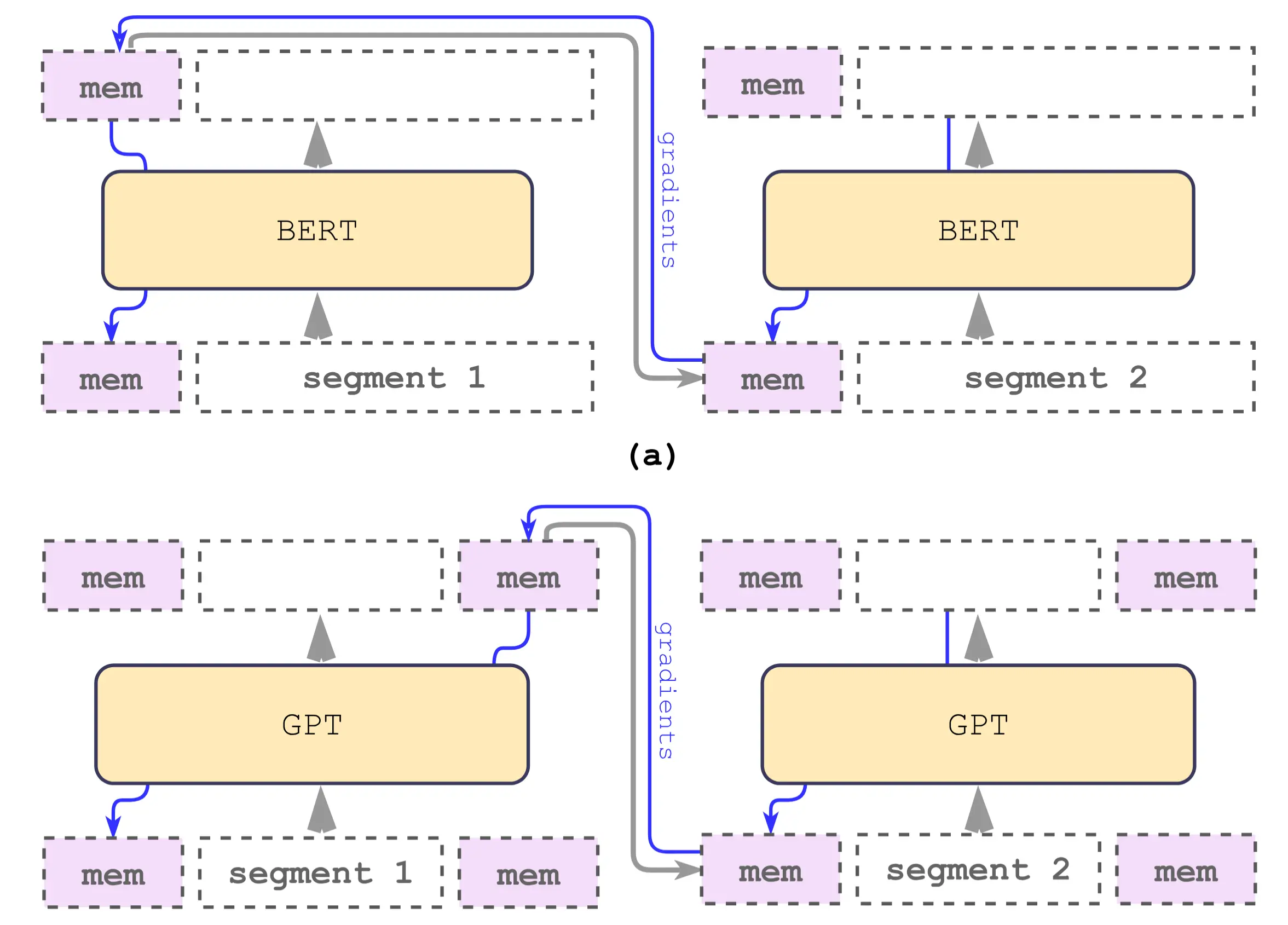

In the encoder variant, RMT places memory tokens only at the beginning of each segment. The next segment’s memory is the Transformer’s output at those memory positions.
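Below is a minimal numpy sketch of the encoder-style recurrence. It assumes a single bidirectional self-attention layer with random weights as a stand-in for the full Transformer. `self_attention` and `rmt_encoder_forward` are hypothetical names for illustration, not the authors' code. Numpy has no autodiff, so BPTT is only noted in a comment:

```python
import numpy as np


def self_attention(x, wq, wk, wv):
    """Single-head bidirectional self-attention with a residual connection (Transformer stand-in)."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(x.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return x + weights @ v


def rmt_encoder_forward(segments, mem_init, params):
    """Encoder-style RMT: memory tokens are prepended to every segment."""
    m = mem_init.shape[0]
    mem = mem_init
    outputs = []
    for seg in segments:
        x = np.concatenate([mem, seg], axis=0)  # [memory ; segment]
        y = self_attention(x, *params)          # memory attends and is attended to
        mem = y[:m]  # outputs at the memory positions become the next memory;
                     # with autodiff, gradients would flow back through `mem` (BPTT)
        outputs.append(y[m:])
    return outputs, mem


rng = np.random.default_rng(0)
d, m, seg_len, n_seg = 16, 4, 8, 3
params = [rng.normal(scale=0.1, size=(d, d)) for _ in range(3)]
mem0 = rng.normal(size=(m, d))  # stands in for the trainable initial memory
segments = [rng.normal(size=(seg_len, d)) for _ in range(n_seg)]
outs, mem = rmt_encoder_forward(segments, mem0, params)
print(len(outs), outs[0].shape, mem.shape)  # 3 (8, 16) (4, 16)
```

Only `mem` (a fixed `m x d` block) is carried between segments. Information from the first segment can therefore reach the last one only through those few memory vectors.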

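For decoder-only models, RMT places the same memory tokens at both ends of each segment. The reason is causal attention. Tokens at the start can be read by every token of the segment, while tokens at the end can attend to the whole segment and can therefore summarize it.

For each segment $\tau$ there is one set of memory vectors $H^{mem}_\tau$. Those same vectors are inserted twice into the input sequence: once at the beginning (this block becomes the “read memory”) and once at the end (this block becomes the “write memory”). Here $\circ$ denotes concatenation along the sequence axis:

$$
\tilde{H}^{0}_{\tau} = \big[H^{mem}_{\tau} \circ H^{0}_{\tau} \circ H^{mem}_{\tau}\big]
$$

After the Transformer, you split the output into read memory, segment output, and write memory:

$$
\bar{H}^{N}_{\tau} = \mathrm{Transformer}\big(\tilde{H}^{0}_{\tau}\big), \qquad \bar{H}^{N}_{\tau} =: \big[H^{read}_{\tau} \circ H^{N}_{\tau} \circ H^{write}_{\tau}\big]
$$

Then you define the memory for the next segment as

$$
H^{mem}_{\tau+1} := H^{write}_{\tau}
$$

Only for the very first segment is $H^{mem}_\tau$ initialized from a fixed set of trainable vectors. Every later segment receives its memory from the previous one.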
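Here is a minimal numpy sketch of one decoder-only step. The same memory block is placed at both ends of the segment, and the output is split into read memory, segment output, and write memory. The write block becomes the next memory. It assumes a single causal self-attention layer with random weights as a stand-in for the Transformer. `causal_self_attention` and `rmt_decoder_step` are hypothetical names. The last lines check the causal-mask argument: changing the final segment token leaves the read memory untouched but changes the write memory.

```python
import numpy as np


def causal_self_attention(x, wq, wk, wv):
    """Single-head causal self-attention with a residual connection (Transformer stand-in)."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(x.shape[-1])
    future = np.triu(np.ones(scores.shape, dtype=bool), k=1)  # True where j > i
    scores = np.where(future, -np.inf, scores)
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return x + weights @ v


def rmt_decoder_step(mem, seg, params):
    """One decoder-only RMT step: [mem ; seg ; mem] -> (read, out, write)."""
    m = mem.shape[0]
    x = np.concatenate([mem, seg, mem], axis=0)  # same memory vectors inserted twice
    y = causal_self_attention(x, *params)
    return y[:m], y[m:-m], y[-m:]  # read memory, segment output, write memory


rng = np.random.default_rng(0)
d, m, seg_len = 16, 4, 8
params = [rng.normal(scale=0.1, size=(d, d)) for _ in range(3)]
mem0 = rng.normal(size=(m, d))  # stands in for the trainable initial memory
seg = rng.normal(size=(seg_len, d))

read, out, write = rmt_decoder_step(mem0, seg, params)
next_mem = write  # memory for the next segment

# Causal mask: perturbing the last segment token only affects positions after it.
seg_perturbed = seg.copy()
seg_perturbed[-1] += 1.0
read2, out2, write2 = rmt_decoder_step(mem0, seg_perturbed, params)
print(np.allclose(read, read2), np.allclose(write, write2))  # True False
```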

The scaling paper for RMT does technically run on 2 million tokens, but on synthetic “needle-in-a-haystack” tasks (memorize a fact, recall it 1M tokens later). This is impressive as a demonstration of the mechanism, but it is very different from reasoning over a 1M-token codebase. The setup is also heavily geared towards algorithmic generalization (Copy, Reverse, Associative Retrieval). They show that the mechanism works.

Train/Evaluation QA

What do they evaluate on?

In the scaling report (arXiv 2304.11062), they evaluate on (1) synthetic long-context QA-style “memorization” tasks built from bAbI facts plus lots of distracting natural-language text from QuALITY (three variants: Memorize, Detect & Memorize, Reasoning), and (2) long-range language modeling on arXiv documents from The Pile, using GPT-2 as the backbone.

How many segments do they train / evaluate over?

For the synthetic tasks they train models on up to 7 segments (curriculum from 1 → 7 segments of length 512), and then evaluate generalization on tasks up to 4096 segments (≈2.0M tokens) in length.

What is the context length?

Base encoder input is 512 tokens per segment; in BERT experiments they actually use 499 “real” tokens plus 3 special tokens, reserving 10 positions for memory. With 4096 segments this yields an effective context of ≈2.0–2.05M tokens. For the GPT-2 language-modeling experiments they use segment sizes like 128 and 1024 tokens but again chain multiple segments with memory.
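As a quick sanity check, the snippet below multiplies out these numbers. It assumes, as stated above, 10 memory positions and 3 special tokens per 512-token BERT segment, with up to 7 segments in training and 4096 in evaluation. `real_tokens_per_segment` and `effective_context` are hypothetical helper names:

```python
SEGMENT_LEN = 512      # BERT input size per segment
MEMORY_SLOTS = 10      # positions reserved for memory tokens
SPECIAL_TOKENS = 3     # special tokens per segment


def real_tokens_per_segment(segment_len=SEGMENT_LEN, memory=MEMORY_SLOTS, special=SPECIAL_TOKENS):
    """Input-text tokens that fit into one segment after memory and special tokens."""
    return segment_len - memory - special


def effective_context(n_segments):
    """Total input-text tokens seen across n_segments chained by memory."""
    return n_segments * real_tokens_per_segment()


print(real_tokens_per_segment())   # 499
print(effective_context(7))        # 3493     (longest training curriculum stage)
print(effective_context(4096))     # 2043904  (~2.04M, longest evaluation)
print(4096 * SEGMENT_LEN)          # 2097152  (if every position is counted)
print(round(4096 / 7))             # 585      (eval vs. train segment-count ratio)
```

Training therefore covers only about 3.5K input tokens, and evaluation extrapolates to roughly 585 times as many segments.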

How many facts do they retrieve in their evaluation?

In the original synthetic tasks:

– Memorize: 1 fact;

– Detect & Memorize: 1 fact at a random position;

– Reasoning: 2 facts randomly placed in the text.

In the BABILong/11M-haystack paper, each sample has 2–320 facts, and the QA tasks can require 1, 2, or 3 supporting facts depending on the task.
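To make the three original task variants concrete, here is a hypothetical sketch of how a sample could be assembled from facts and distractor sentences. It rests on several assumptions that are not taken from the authors' code:

- In Memorize, the fact sits at the very start.
- In the other two variants, the facts are placed at random positions (keeping their order).
- The question always comes last.
- Tokenization and splitting into 512-token segments are left out.

`make_sample` and the example sentences are made up for illustration:

```python
import random

EXPECTED_FACTS = {"memorize": 1, "detect_memorize": 1, "reasoning": 2}


def make_sample(facts, question, distractors, task, rng):
    """Build one synthetic long-context sample as a list of sentences (hypothetical helper)."""
    if task not in EXPECTED_FACTS:
        raise ValueError(f"unknown task: {task}")
    if len(facts) != EXPECTED_FACTS[task]:
        raise ValueError(f"{task} expects {EXPECTED_FACTS[task]} fact(s)")
    text = list(distractors)
    if task == "memorize":
        return [facts[0]] + text + [question]  # fact first, question last
    # pick distinct gaps between distractor sentences, in ascending order
    positions = sorted(rng.sample(range(len(text) + 1), len(facts)))
    for offset, (pos, fact) in enumerate(zip(positions, facts)):
        text.insert(pos + offset, fact)  # offset accounts for facts already inserted
    return text + [question]


rng = random.Random(0)
filler = [f"Distractor sentence {i}." for i in range(1000)]  # placeholder for QuALITY text
sample = make_sample(["Daniel went to the hallway."], "Where is Daniel?", filler, "detect_memorize", rng)
print(len(sample), sample[-1])  # 1002 Where is Daniel?
```

Keeping the facts in their original order can matter for bAbI-style reasoning, where a later fact may override an earlier one.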

Do they only do needle-in-a-haystack eval or also RL stuff?

The original RMT paper and the 2304.11062 scaling report cover synthetic needle-in-a-haystack (NiH) style memorization tasks and long-range language modeling; there are no RL experiments.

Potentially interesting follow-up work?

Footnotes

1. So in that sense, RMT seems more like a bio-plausible mechanism, better suited for RL. More precisely, it encourages the model to learn more directly what to store in memory, and it separates memory from the rest of the processing. But TrXL caching and RMT recurrence can also be used together. Extended lossless memory plus some learned compression of it seems like a good combo. For reasoning agents, though (or simply for practical purposes, similar to biological constraints), I would put more emphasis on learned memory. Titans mixes persistent (frozen) memory, lossy long-term memory, and normal attention-based short-term memory.