year: 2019

paper: https://arxiv.org/pdf/1910.06764

website:

code:

connections: TrXL, transformers in RL

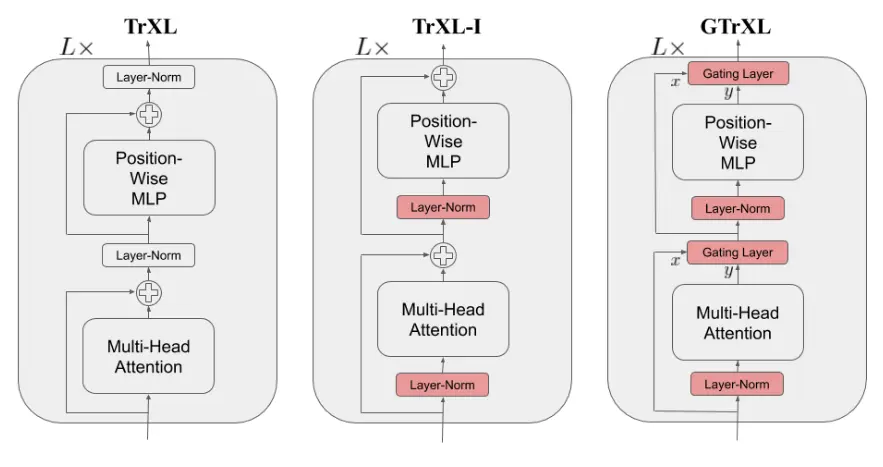

Adds a gating mechanism to Transformer-XL and uses PreNorm (layer norm applied to the sublayer input instead of its output), which stabilizes training in RL settings.
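A rough sketch of one layer, as I understand it from the paper. Here $E^{(l-1)}$ is the layer input, $M^{(l-1)}$ the Transformer-XL memory, $f^{(l)}$ the position-wise MLP, and $g$ a gating function taking (residual stream, sublayer output). The exact notation is my assumption and should be checked against the paper:

$$
\begin{aligned}
\bar{Y}^{(l)} &= \mathrm{MHA}\big(\mathrm{LayerNorm}([\mathrm{StopGrad}(M^{(l-1)}),\, E^{(l-1)}])\big) \\
Y^{(l)} &= g_{\mathrm{MHA}}^{(l)}\big(E^{(l-1)},\, \mathrm{ReLU}(\bar{Y}^{(l)})\big) \\
\bar{E}^{(l)} &= f^{(l)}\big(\mathrm{LayerNorm}(Y^{(l)})\big) \\
E^{(l)} &= g_{\mathrm{MLP}}^{(l)}\big(Y^{(l)},\, \mathrm{ReLU}(\bar{E}^{(l)})\big)
\end{aligned}
$$

With $g(x, y) = x + y$ and no ReLU this reduces to a standard PreNorm Transformer-XL block; the layer norm sits inside the sublayer branch, so the residual path from input to output is never normalized.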

The gate replaces the plain residual sum and weighs the contribution of the residual connection against the sublayer output.
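For reference, the GRU-type gate can be written as follows, with $x$ the residual stream input and $y$ the sublayer output. This is my transcription of the paper's formulation, so treat the symbol names as assumptions:

$$
\begin{aligned}
r &= \sigma(W_r y + U_r x) \\
z &= \sigma(W_z y + U_z x - b_g) \\
\hat{h} &= \tanh\big(W_g y + U_g (r \odot x)\big) \\
g(x, y) &= (1 - z) \odot x + z \odot \hat{h}
\end{aligned}
$$

The update gate $z$ interpolates elementwise between passing $x$ through unchanged ($z \to 0$) and replacing it with the candidate $\hat{h}$ ($z \to 1$).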

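This is a GRU-style gating mechanism, with the difference that the bias $b_g$ in the update gate $z = \sigma(W_z y + U_z x - b_g)$ is initialized to a positive value (2 in the paper), which biases $z$ to be small at the start of training (with small random weight matrices the sigmoid input is roughly $-b_g$), s.t. initially the layer behaves approximately like the identity function, $g(x, y) \approx x$, making it easier to optimize.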
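A minimal pure-Python sketch of this gate, to make the effect of the bias concrete. The helper names (`gru_gate`, `init_matrix`, `max_dev`), the dimension, and the 0.01 weight scale are illustrative assumptions, not taken from the paper or either linked implementation:

```python
import math
import random

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def matvec(W, v):
    # Plain matrix-vector product on nested lists.
    return [sum(w * a for w, a in zip(row, v)) for row in W]

def init_matrix(dim, scale, rng):
    # Small Gaussian weights (the scale is an assumption for illustration).
    return [[rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(dim)]

def gru_gate(x, y, p, b_g):
    """x: residual stream input, y: sublayer output, b_g: update-gate bias."""
    r = [sigmoid(a + b) for a, b in zip(matvec(p["W_r"], y), matvec(p["U_r"], x))]
    z = [sigmoid(a + b - b_g) for a, b in zip(matvec(p["W_z"], y), matvec(p["U_z"], x))]
    rx = [ri * xi for ri, xi in zip(r, x)]
    h = [math.tanh(a + b) for a, b in zip(matvec(p["W_g"], y), matvec(p["U_g"], rx))]
    # Elementwise interpolation between the residual input and the candidate.
    return [(1.0 - zi) * xi + zi * hi for zi, xi, hi in zip(z, x, h)]

rng = random.Random(0)
dim = 8
names = ("W_r", "U_r", "W_z", "U_z", "W_g", "U_g")
params = {name: init_matrix(dim, 0.01, rng) for name in names}
x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
y = [rng.gauss(0.0, 1.0) for _ in range(dim)]

def max_dev(b_g):
    # Largest elementwise deviation of the gate output from the identity map.
    out = gru_gate(x, y, params, b_g)
    return max(abs(o - xi) for o, xi in zip(out, x))

dev_biased = max_dev(2.0)    # bias as described above
dev_unbiased = max_dev(0.0)  # plain GRU-style gate without the bias
print(f"b_g=2: {dev_biased:.3f}  b_g=0: {dev_unbiased:.3f}")
```

With $b_g = 2$ the update gate sits near $\sigma(-2) \approx 0.12$, so the output stays close to $x$ but is not exactly equal to it; a larger bias would push it closer to the identity at the cost of slower gate opening.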

The GRU-type gate performed best among the gating variants compared: sigmoid on the input stream, sigmoid on the output stream, sigmoid on both streams (highway connection), and sigmoid on the output stream with a tanh applied before the elementwise multiplication (SigTanh).
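As I recall them from the paper, the alternative gates look roughly like this, with $x$ the residual stream input and $y$ the sublayer output. The exact parameterization (which stream feeds the sigmoid, where the bias appears) should be verified against the paper:

$$
\begin{aligned}
\text{Input:} \quad & g(x, y) = \sigma(W_g x) \odot x + y \\
\text{Output:} \quad & g(x, y) = x + \sigma(W_g x - b_g) \odot y \\
\text{Highway:} \quad & g(x, y) = \sigma(W_g x + b_g) \odot x + \big(1 - \sigma(W_g x + b_g)\big) \odot y \\
\text{SigTanh:} \quad & g(x, y) = x + \sigma(W_g y - b) \odot \tanh(U_g y)
\end{aligned}
$$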

Details of an (unofficial) implementation on Craftax: https://github.com/Reytuag/transformerXL_PPO_JA

Model size: ~5M parameters

Transformer-XL configuration:
- EMBED_SIZE: 256
- num_layers: 2 transformer layers
- num_heads: 8 attention heads
- qkv_features: 256 (query/key/value dimension)
- hidden_layers: 256 (MLP hidden size for actor/critic heads)

Sequence length & memory:
- WINDOW_MEM: 128 (attends to last 128 steps)
- WINDOW_GRAD: 64 (gradient flows through 64 steps during training)
- NUM_STEPS: 128 (rollout length between updates)

PPO hyperparameters:
- LR: 2e-4 (with linear annealing)
- UPDATE_EPOCHS: 4
- NUM_MINIBATCHES: 8
- GAMMA: 0.999
- GAE_LAMBDA: 0.8
- CLIP_EPS: 0.2
- ENT_COEF: 0.002
- VF_COEF: 0.5
- MAX_GRAD_NORM: 1.0
- NUM_ENVS: 1024
- TOTAL_TIMESTEPS: 1e9

Observation processing:
- Uses CraftaxSymbolicEnvNoAutoReset (symbolic observations, not pixels)
- Observations flattened and fed directly to transformer encoder (Dense layer)
- No CNN preprocessing: symbolic state is already a feature vector
- Uses OptimisticResetVecEnvWrapper for efficient batched resets

Rewards:
- Standard Craftax episode rewards (no reward shaping)
- No intrinsic motivation (unlike baselines in paper)
- No curriculum learning/UED (unlike baselines)

Results:
- Normalized return: 18.3% (vs 15.3% for PPO-RNN baseline @ 1e9 steps)
- Reached 3rd level (The Sewer), not achieved by any baseline
- High "enter gnomish mine" success rate (much higher than PPO-RNN even at 10e9 steps)
- Several advanced achievements unlocked, not reached by any baseline
- At 4e9 steps: 20.6% normalized return

Training: 6h30 on single A100 for 1e9 steps (1024 envs)
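A quick back-of-the-envelope check of what these settings imply per update. The derived quantities assume the usual PPO bookkeeping (one rollout of NUM_ENVS x NUM_STEPS transitions per update, split evenly into minibatches) and a learning rate decaying linearly to zero over all updates; how the repo actually splits minibatches and anneals the rate is not confirmed here, and `linear_lr` is a hypothetical helper:

```python
# Values copied from the config above.
NUM_ENVS = 1024
NUM_STEPS = 128
NUM_MINIBATCHES = 8
UPDATE_EPOCHS = 4
TOTAL_TIMESTEPS = int(1e9)
LR = 2e-4
TRAIN_HOURS = 6.5  # "6h30 on single A100"

# Transitions collected per PPO update (assumed: one full rollout per update).
batch_size = NUM_ENVS * NUM_STEPS
# Transitions per minibatch (assumed: even split of the rollout batch).
minibatch_size = batch_size // NUM_MINIBATCHES
# Number of rollout/update cycles that fit in the step budget.
num_updates = TOTAL_TIMESTEPS // batch_size
# Total optimizer steps over the run.
grad_steps = num_updates * UPDATE_EPOCHS * NUM_MINIBATCHES
# Average environment steps per second implied by the reported wall-clock time.
steps_per_second = TOTAL_TIMESTEPS / (TRAIN_HOURS * 3600)

def linear_lr(update_idx):
    # Hypothetical linear annealing from LR to 0 across all updates.
    return LR * (1.0 - update_idx / num_updates)

print(batch_size, minibatch_size, num_updates, grad_steps)
print(round(steps_per_second), linear_lr(num_updates // 2))
```

Note that NUM_STEPS equals WINDOW_MEM (128) while gradients only flow through WINDOW_GRAD (64) steps, so each rollout is longer than the backpropagated window.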

Cleaner impl, copy-pasteable model: https://github.com/subho406/agalite/blob/main/src_pure/models/gtrxl.py